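Today, while scraping wallpapers with Scrapy (url: http://pic.netbian.com/4kmein...), I ran into a few problems. I am recording them here for later discussion and as a lesson learned.

Because the site is rendered dynamically, I chose to hook Scrapy up to Selenium. (Scrapy fetches pages much like the requests library does, by issuing HTTP requests directly, so on its own it cannot scrape pages rendered by JavaScript.)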

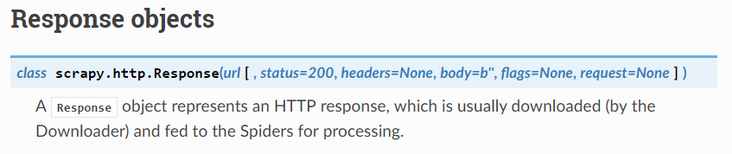

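So the Downloader Middleware needs to take the Request and return a Response, and the problem turned out to be in that Response. The official documentation gives the signature `class scrapy.http.Response(url[, status=200, headers=None, body=b'', flags=None, request=None])`, so I imported the class with `from scrapy.http import Response`.

Running `scrapy crawl girl` produced the following error: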

```
results=response.xpath('//*[@id="main"]/div[3]/ul/li/a/img')
raise NotSupported("Response content isn't text")
scrapy.exceptions.NotSupported: Response content isn't text
```

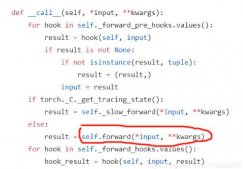

Checking the relevant code:

```python
# middlewares.py
from scrapy import signals
from scrapy.http import Response
from scrapy.exceptions import IgnoreRequest
import selenium
from selenium.webdriver.common.by import By
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.support import expected_conditions as EC


class Pic4KgirlDownloaderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the downloader middleware does not modify the
    # passed objects.

    def process_request(self, request, spider):
        # Called for each request that goes through the downloader
        # middleware.

        # Must either:
        # - return None: continue processing this request
        # - or return a Response object
        # - or return a Request object
        # - or raise IgnoreRequest: process_exception() methods of
        #   installed downloader middleware will be called
        try:
            self.browser=selenium.webdriver.Chrome()
            self.wait=WebDriverWait(self.browser,10)
            self.browser.get(request.url)
            self.wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '#main > div.page > a:nth-child(10)')))
            return Response(url=request.url,status=200,request=request,body=self.browser.page_source.encode('utf-8'))
        #except:
            #raise IgnoreRequest()
        finally:
            self.browser.close()
```

The problem appeared to come from this line:

```python
return Response(url=request.url,status=200,request=request,body=self.browser.page_source.encode('utf-8'))
```

Looking at the definition of the Response class:

```python
    @property
    def text(self):
        """For subclasses of TextResponse, this will return the body
        as text (unicode object in Python 2 and str in Python 3)
        """
        raise AttributeError("Response content isn't text")

    def css(self, *a, **kw):
        """Shortcut method implemented only by responses whose content
        is text (subclasses of TextResponse).
        """
        raise NotSupported("Response content isn't text")

    def xpath(self, *a, **kw):
        """Shortcut method implemented only by responses whose content
        is text (subclasses of TextResponse).
        """
        raise NotSupported("Response content isn't text")
```

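This shows that the base Response class does not support `text`, `css()` or `xpath()` itself. These only work on a subclass that overrides them.

Response subclasses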

**TextResponse objects**
class scrapy.http.TextResponse(url[, encoding[, ...]])

**HtmlResponse objects**
class scrapy.http.HtmlResponse(url[, ...])

**XmlResponse objects**
class scrapy.http.XmlResponse(url[, ...])

Take TextResponse as an example. After importing it with `from scrapy.http import TextResponse`, its definition reads:

```python
class TextResponse(Response):

    _DEFAULT_ENCODING = 'ascii'

    def __init__(self, *args, **kwargs):
        self._encoding = kwargs.pop('encoding', None)
        self._cached_benc = None
        self._cached_ubody = None
        self._cached_selector = None
        super(TextResponse, self).__init__(*args, **kwargs)
```

In this class the xpath method has been overridden:

```python
    @property
    def selector(self):
        from scrapy.selector import Selector
        if self._cached_selector is None:
            self._cached_selector = Selector(self)
        return self._cached_selector

    def xpath(self, query, **kwargs):
        return self.selector.xpath(query, **kwargs)

    def css(self, query):
        return self.selector.css(query)
```
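To make the mechanism easier to see, here is a rough sketch of the same pattern in plain Python, with no Scrapy dependency. The class names `BaseResponse` and `TextLikeResponse` are hypothetical, and `xml.etree.ElementTree` stands in for Scrapy's `Selector`. It is an illustration of the idea, not Scrapy's actual implementation.

```python
import xml.etree.ElementTree as ET


class BaseResponse:
    """Hypothetical stand-in for the base class: holds bytes, no selectors."""

    def __init__(self, body=b""):
        self.body = body

    def findall(self, path):
        # Like Response.xpath(), the base class refuses to query its body.
        raise NotImplementedError("Response content isn't text")


class TextLikeResponse(BaseResponse):
    """Hypothetical stand-in for a text subclass with a cached parse tree."""

    def __init__(self, body=b""):
        super().__init__(body)
        self._cached_tree = None

    @property
    def tree(self):
        # Parse the body once, on first access, then reuse the result.
        if self._cached_tree is None:
            self._cached_tree = ET.fromstring(self.body)
        return self._cached_tree

    def findall(self, path):
        # The override delegates to the lazily built tree.
        return self.tree.findall(path)


doc = b"<ul><li><img src='a.jpg'/></li><li><img src='b.jpg'/></li></ul>"
resp = TextLikeResponse(doc)
srcs = [img.get("src") for img in resp.findall("./li/img")]
same_tree = resp.tree is resp.tree  # the cached object is returned each time
```

The base class keeps the method so that callers get a clear error, while the subclass supplies the real behaviour and avoids parsing the body more than once.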

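So to call these methods on a response, do not instantiate Response directly. Use one of its subclasses instead, which override the relevant methods.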

Scrapy Crawler Beginner Tutorial 11: Request and Response

Scrapy documentation: https://doc.scrapy.org/en/lat...


Original link: https://segmentfault.com/a/1190000018449717