1. Reading CSV data for DBSCAN analysis

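Read the relevant columns from the CSV file, convert them into the format the algorithm expects, and then run DBSCAN. A lot of DBSCAN code is already publicly available; the code in this article is adapted from such public code.

The code is as follows: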


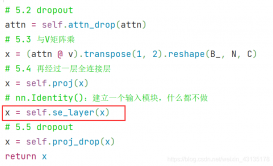

    import copy
    import random
    import time

    import matplotlib.pyplot as plt
    import numpy as np
    import pandas as pd
    # from sklearn.datasets import load_iris


    def find_neighbor(j, x, eps):
        """Return the indices of all points within eps of point j (j itself included)."""
        N = list()
        for i in range(x.shape[0]):
            temp = np.sqrt(np.sum(np.square(x[j] - x[i])))  # Euclidean distance
            if temp <= eps:
                N.append(i)
        return set(N)


    def DBSCAN(X, eps, min_Pts):
        k = -1
        neighbor_list = []  # eps-neighborhood of every point
        omega_list = []  # set of core objects
        gama = set([x for x in range(len(X))])  # initially mark every point as unvisited
        cluster = [-1 for _ in range(len(X))]  # cluster labels; -1 means noise
        for i in range(len(X)):
            neighbor_list.append(find_neighbor(i, X, eps))
            if len(neighbor_list[-1]) >= min_Pts:
                omega_list.append(i)  # add the sample to the core-object set
        omega_list = set(omega_list)  # convert to a set for easier set operations
        while len(omega_list) > 0:
            gama_old = copy.deepcopy(gama)
            j = random.choice(list(omega_list))  # pick a core object at random
            k = k + 1
            Q = list()
            Q.append(j)
            gama.remove(j)
            while len(Q) > 0:
                q = Q[0]
                Q.remove(q)
                if len(neighbor_list[q]) >= min_Pts:
                    delta = neighbor_list[q] & gama
                    deltalist = list(delta)
                    for i in range(len(delta)):
                        Q.append(deltalist[i])
                    gama = gama - delta
            Ck = gama_old - gama  # points visited in this round form cluster k
            Cklist = list(Ck)
            for i in range(len(Ck)):
                cluster[Cklist[i]] = k
            omega_list = omega_list - Ck
        return cluster


    # X = load_iris().data
    data = pd.read_csv("testdata.csv")
    x, y = data['Time (sec)'], data['Height (m HAE)']
    print(type(x))
    n = len(x)
    x = np.array(x)
    x = x.reshape(n, 1)
    y = np.array(y)
    y = y.reshape(n, 1)
    X = np.hstack((x, y))  # shape (n, 2): one row per sample

    eps = 0.08
    min_Pts = 5
    begin = time.time()
    C = DBSCAN(X, eps, min_Pts)
    end = time.time()
    print("elapsed: %.3f s" % (end - begin))
    plt.figure()
    plt.scatter(X[:, 0], X[:, 1], c=C)
    plt.show()
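The column handling at the end of the script (two `reshape` calls followed by `np.hstack`) can also be written as a single column selection. The following is a minimal sketch, not the original author's code: the column names are the ones used in this article, while the inline CSV text and its values are made up so that the snippet runs without `testdata.csv`.

```python
import io

import pandas as pd

# Hypothetical stand-in for testdata.csv; the values are invented for illustration.
csv_text = """Time (sec),Height (m HAE)
0.00,10.2
0.01,10.3
0.02,10.1
0.03,25.7
"""

data = pd.read_csv(io.StringIO(csv_text))

# Select both columns at once and convert them to a float array of
# shape (n_samples, 2), the layout the DBSCAN function expects.
X = data[["Time (sec)", "Height (m HAE)"]].to_numpy(dtype=float)
print(X.shape)  # (4, 2)
```

With a real file, `io.StringIO(csv_text)` would simply be replaced by the file path.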

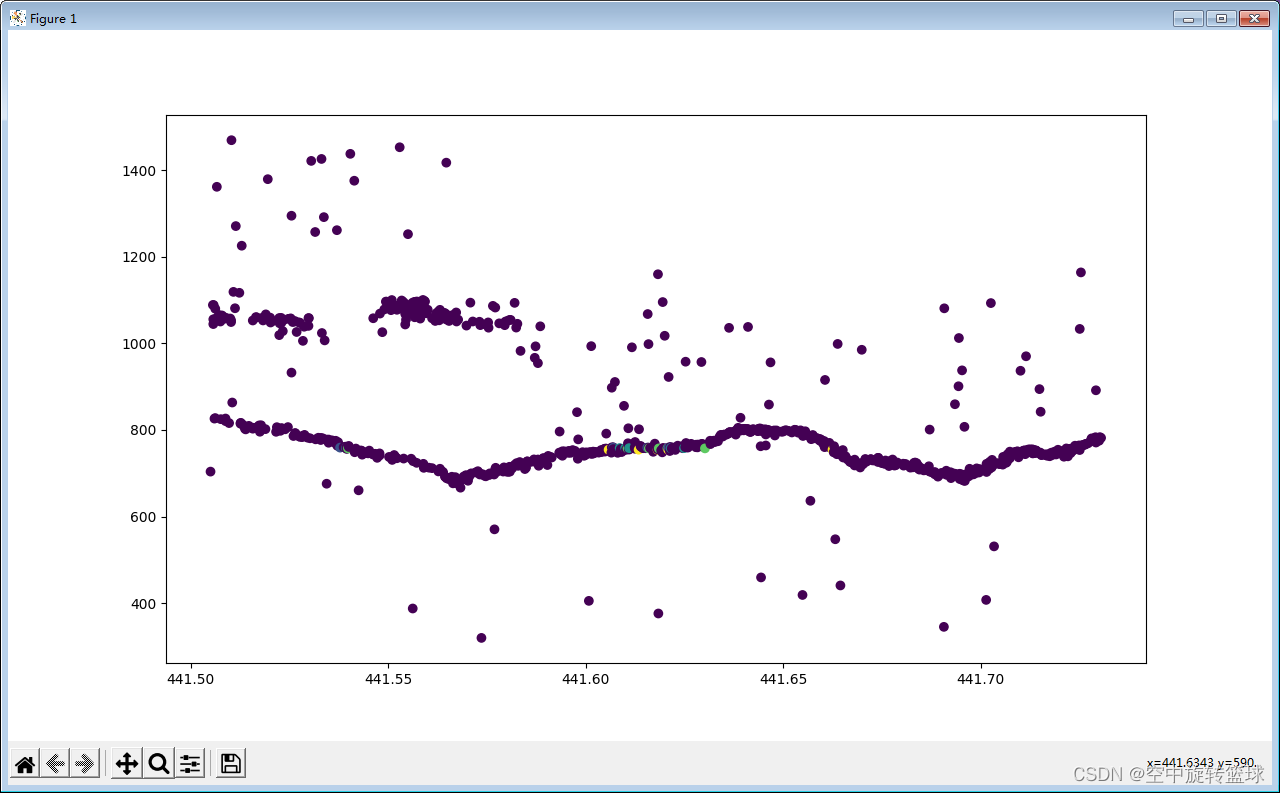

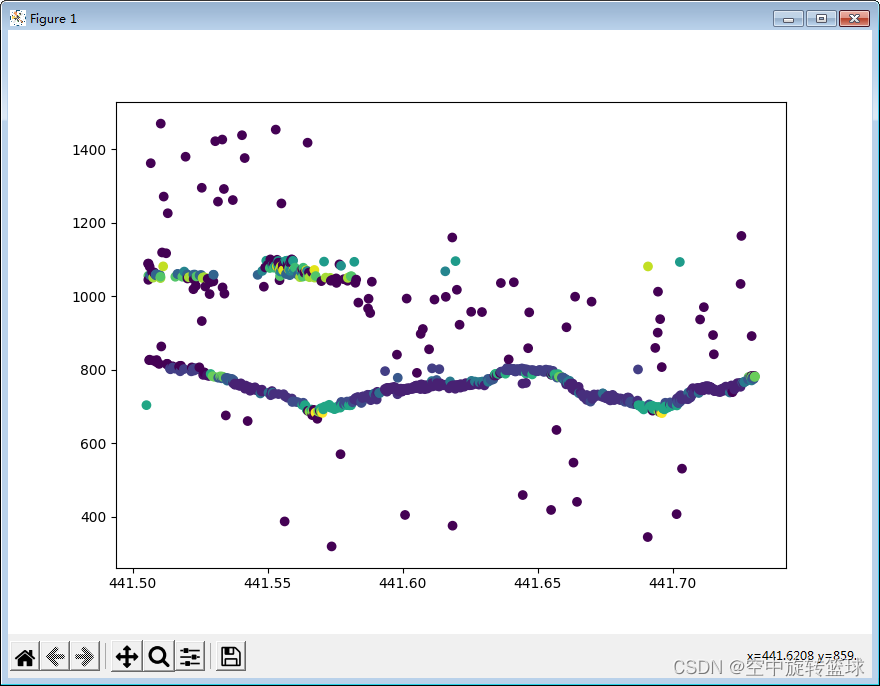

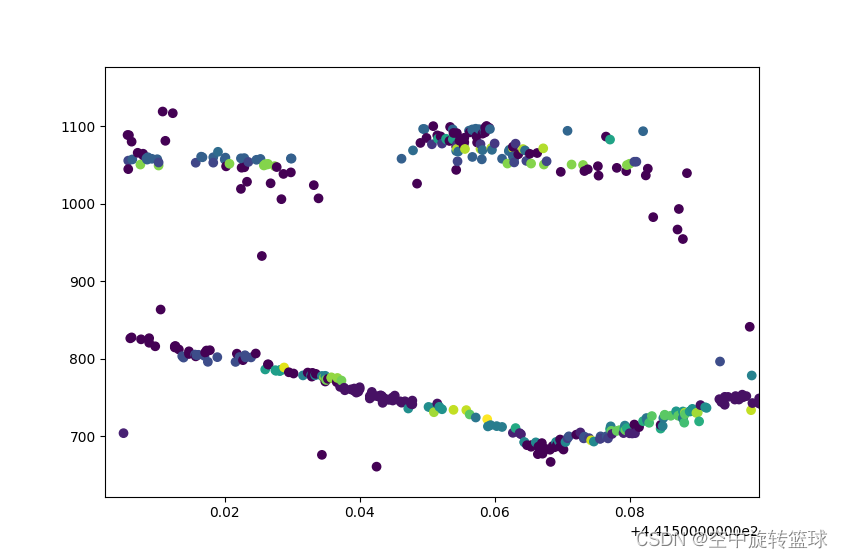

2. Output

Results after changing the parameters:


    eps = 0.8
    min_Pts = 5
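Instead of picking `eps` purely by trial and error, a common heuristic is to look at a k-distance curve: for every point, compute the distance to its k-th nearest neighbour, sort these values, and look for the bend. The sketch below is an illustration under assumptions, not part of the original code: the helper name `k_distances` is hypothetical, the data is randomly generated, and `k` is set to `min_Pts = 5` with the point itself counted, which matches how `find_neighbor` counts neighbours.

```python
import numpy as np


def k_distances(X, k):
    """Sorted distance from each point to its k-th nearest neighbour.

    The point itself counts as the 1st neighbour (distance 0), matching
    find_neighbor, which includes the query point in its own neighbourhood.
    """
    diff = X[:, None, :] - X[None, :, :]       # pairwise differences, shape (n, n, d)
    dist = np.sqrt(np.sum(diff ** 2, axis=2))  # pairwise distances, shape (n, n)
    dist.sort(axis=1)                          # each row ascending; column 0 is the point itself
    return np.sort(dist[:, k - 1])


rng = np.random.default_rng(0)
# Made-up data: a tight blob of 40 points plus 10 widely scattered points.
X = np.vstack([
    rng.normal(loc=0.0, scale=0.05, size=(40, 2)),
    rng.uniform(low=-3.0, high=3.0, size=(10, 2)),
])
kd = k_distances(X, k=5)

# A point is a core object for a given eps exactly when its k-distance is <= eps.
for eps in (0.08, 0.8):
    print(eps, int(np.sum(kd <= eps)))
```

Plotting `kd` with `plt.plot(kd)` shows the curve; values of `eps` near the sharp rise are the usual candidates.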

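3. Computational efficiency

With a small data set the efficiency problem is not noticeable, but as the amount of data grows it becomes pronounced: `find_neighbor` compares every point with every other point in a pure-Python loop, so the number of distance computations grows quadratically with the number of points, and the code cannot keep up with large data sets. A later step will be to optimize the computation or to adopt a C++ implementation.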
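One inexpensive step in that direction is to compute all neighbourhoods at once with NumPy broadcasting instead of calling `find_neighbor` once per point. This is a sketch under assumptions rather than a tested replacement: `find_all_neighbors` is a hypothetical helper name, and the four sample points are made up.

```python
import numpy as np


def find_all_neighbors(X, eps):
    """Return a list of index sets; entry j holds every i with ||X[j] - X[i]|| <= eps."""
    diff = X[:, None, :] - X[None, :, :]       # pairwise differences, shape (n, n, d)
    dist = np.sqrt(np.sum(diff ** 2, axis=2))  # pairwise Euclidean distances, shape (n, n)
    within = dist <= eps                       # boolean neighbourhood matrix
    return [set(np.flatnonzero(row).tolist()) for row in within]


# Made-up data: three points close together and one far away.
X = np.array([[0.0, 0.0], [0.05, 0.0], [0.0, 0.05], [5.0, 5.0]])
neighbors = find_all_neighbors(X, eps=0.08)
print(neighbors)
```

The returned list has the same layout as `neighbor_list` in the `DBSCAN` function, so it could be used to fill that list in one call. The distance matrix still needs memory proportional to the square of the number of points, so this moves the quadratic work into NumPy but does not remove it.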


Original article: https://blog.csdn.net/soderayer/article/details/124089170