Here is the error message:

```
RuntimeError:
        An attempt has been made to start a new process before the
        current process has finished its bootstrapping phase.

        This probably means that you are not using fork to start your
        child processes and you have forgotten to use the proper idiom
        in the main module:

            if __name__ == '__main__':
                freeze_support()
                ...

        The "freeze_support()" line can be omitted if the program
        is not going to be frozen to produce an executable.
```
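Here is the smallest form of the idiom the message asks for. This is a sketch in which `square()` is a hypothetical worker function. Only the code under the guard starts processes, so a child process that re-imports the script never reaches the `Pool(...)` call.

```python
import multiprocessing as mp


def square(x):
    # Worker functions live at module top level so that child
    # processes can find them by name when they import the script
    return x * x


if __name__ == '__main__':
    # Needed only when the script is frozen into an executable;
    # it has no effect when run normally by the Python interpreter
    mp.freeze_support()
    with mp.Pool(4) as pool:
        print(pool.map(square, range(5)))  # [0, 1, 4, 9, 16]
```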

Below is the original code that raised the error:

```python
import multiprocessing as mp
import time
from urllib.request import urlopen, urljoin
from bs4 import BeautifulSoup
import re

base_url = "https://morvanzhou.github.io/"

# crawl: fetch a web page
def crawl(url):
    response = urlopen(url)
    time.sleep(0.1)
    return response.read().decode()

# parse: parse a web page
def parse(html):
    soup = BeautifulSoup(html, 'html.parser')
    urls = soup.find_all('a', {"href": re.compile('^/.+?/$')})
    title = soup.find('h1').get_text().strip()
    page_urls = set([urljoin(base_url, url['href']) for url in urls])
    url = soup.find('meta', {'property': "og:url"})['content']
    return title, page_urls, url

unseen = set([base_url])
seen = set()

restricted_crawl = True

# Module-level code: under the spawn start method (the Windows default),
# every worker process re-runs this line while it is starting up
pool = mp.Pool(4)

count, t1 = 1, time.time()

while len(unseen) != 0:  # there are still URLs to visit
    if restricted_crawl and len(seen) > 20:
        break

    print('\nDistributed Crawling...')
    crawl_jobs = [pool.apply_async(crawl, args=(url,)) for url in unseen]
    htmls = [j.get() for j in crawl_jobs]  # request connection

    print('\nDistributed Parsing...')
    parse_jobs = [pool.apply_async(parse, args=(html,)) for html in htmls]
    results = [j.get() for j in parse_jobs]  # parse html

    print('\nAnalysing...')
    seen.update(unseen)  # mark the crawled URLs as seen
    unseen.clear()  # nothing unseen

    for title, page_urls, url in results:
        print(count, title, url)
        count += 1
        unseen.update(page_urls - seen)  # get new URLs to crawl

print('Total time: %.1f s' % (time.time() - t1))  # 16 s !!!
```
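Why does the code above fail? CPython's `spawn` start method is the default on Windows, and on macOS since Python 3.8. It starts each worker as a fresh interpreter that re-runs the main script under the module name `__mp_main__`. As a result, everything not protected by `if __name__ == '__main__':` runs again in every worker. Here that includes `pool = mp.Pool(4)`, so each worker tries to start its own pool while still bootstrapping, which is the RuntimeError above.

The sketch below starts no processes. It only mimics that re-import with `runpy.run_path`, using a hypothetical throwaway script, `demo_script.py`:

```python
import os
import runpy
import tempfile

# A tiny stand-in "main script": one unguarded line, one guarded line
SCRIPT = '''
ran = ["top-level"]
if __name__ == "__main__":
    ran.append("guarded")
'''


def run_as(path, run_name):
    # Execute the file with __name__ set to run_name and return
    # the list the script recorded
    return runpy.run_path(path, run_name=run_name)["ran"]


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "demo_script.py")
    with open(path, "w", encoding="utf-8") as f:
        f.write(SCRIPT)
    as_parent = run_as(path, "__main__")    # like: python demo_script.py
    as_child = run_as(path, "__mp_main__")  # like a spawned worker

print(as_parent)  # ['top-level', 'guarded']
print(as_child)   # ['top-level']
```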

And here is the corrected code:

```python
import multiprocessing as mp
import time
from urllib.request import urlopen, urljoin
from bs4 import BeautifulSoup
import re

base_url = "https://morvanzhou.github.io/"

# crawl: fetch a web page
def crawl(url):
    response = urlopen(url)
    time.sleep(0.1)
    return response.read().decode()

# parse: parse a web page
def parse(html):
    soup = BeautifulSoup(html, 'html.parser')
    urls = soup.find_all('a', {"href": re.compile('^/.+?/$')})
```
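The `href` filter in `parse()` is easy to misread. BeautifulSoup tests a compiled pattern against each `href` value with `search()`, so `^/.+?/$` keeps only site-relative links that both start and end with `/`. `urljoin` then turns those into absolute URLs. Here is a stdlib-only sketch of that step; the helper `filter_links` and the sample `hrefs` are hypothetical:

```python
import re
from urllib.parse import urljoin

base_url = "https://morvanzhou.github.io/"

# Same pattern as in parse(): a leading "/", at least one more character,
# and a trailing "/", i.e. site-relative "directory" links
LINK_PATTERN = re.compile('^/.+?/$')


def filter_links(hrefs, base=base_url):
    # Keep matching hrefs and resolve them against the site root
    return {urljoin(base, h) for h in hrefs if LINK_PATTERN.search(h)}


hrefs = ["/tutorials/", "/about/", "https://github.com/",
         "/tutorials/page.html", "/", "#top"]
print(sorted(filter_links(hrefs)))
# ['https://morvanzhou.github.io/about/', 'https://morvanzhou.github.io/tutorials/']
```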

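The fix is to move everything that creates or uses the pool under `if __name__ == '__main__':`, as the error message suggests. Worker processes then only import `crawl()` and `parse()`. The sketch below shows the whole program in that shape, under some assumptions:

- `crawl()` and `parse()` are hypothetical stand-ins driven by a made-up `FAKE_SITE` dictionary, not the real `urlopen`/BeautifulSoup versions, so the example runs offline.
- `pool.map` replaces the `apply_async`/`get()` pairs. This behaves the same here because each batch is waited on immediately.

```python
import multiprocessing as mp
import time

# Hypothetical offline site: url -> links found on that page
FAKE_SITE = {
    "https://example.com/": ["https://example.com/a/", "https://example.com/b/"],
    "https://example.com/a/": ["https://example.com/", "https://example.com/c/"],
    "https://example.com/b/": [],
    "https://example.com/c/": ["https://example.com/a/"],
}


def crawl(url):
    # Stand-in for urlopen(url).read().decode(): the "page" is the URL
    time.sleep(0.01)
    return url


def parse(html):
    # Stand-in for the BeautifulSoup version: (title, page_urls, url)
    return "title of " + html, set(FAKE_SITE.get(html, [])), html


def run_crawler(start_url, mapper, max_seen=20):
    # mapper is pool.map in real use, or the builtin map for a quick check
    unseen, seen, visited = {start_url}, set(), []
    while unseen:
        if len(seen) > max_seen:  # same stop rule as restricted_crawl
            break
        htmls = list(mapper(crawl, list(unseen)))
        results = list(mapper(parse, htmls))
        seen.update(unseen)
        unseen.clear()
        for title, page_urls, url in results:
            visited.append(url)
            unseen.update(page_urls - seen)
    return visited


if __name__ == "__main__":
    # Only the main process gets here; workers just import the functions
    mp.freeze_support()
    t1 = time.time()
    with mp.Pool(4) as pool:
        for count, url in enumerate(run_crawler("https://example.com/", pool.map), start=1):
            print(count, url)
    print("Total time: %.1f s" % (time.time() - t1))
```

Passing the map function in as `mapper` keeps the crawl loop free of process-starting side effects when the module is imported. Inside the guard you pass `pool.map`; for a quick single-process check, the builtin `map` works.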

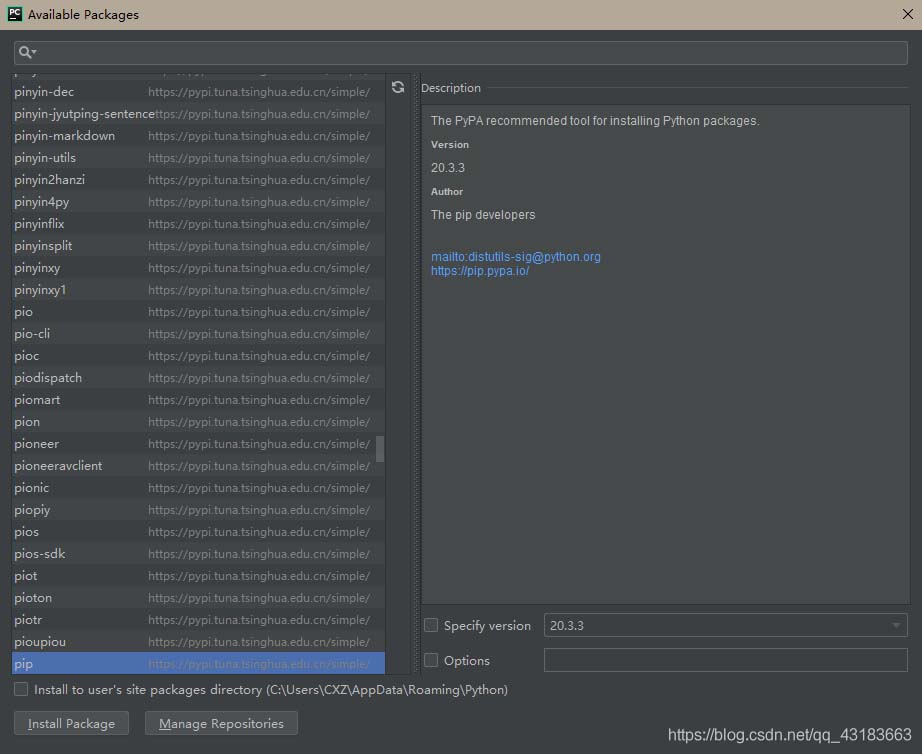

2. Double-click numpy to change its version:

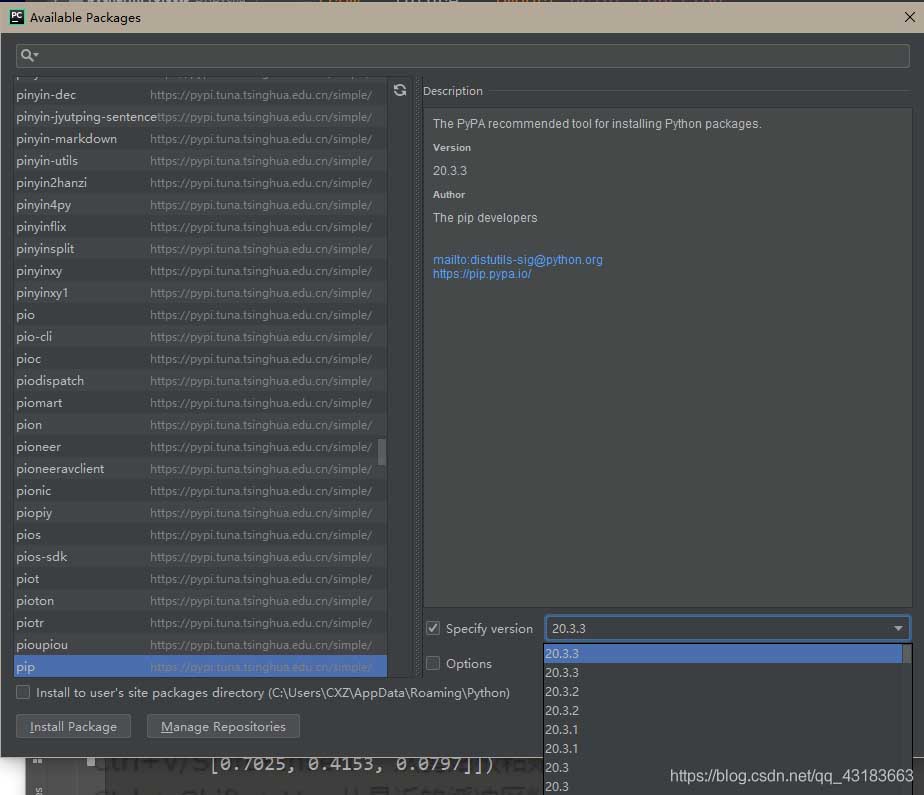

3. Tick the checkbox to enable changing the version, then select the older version you need and install it:

Once that is done, just run the program again.
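To confirm which numpy version the interpreter actually picks up after the downgrade, you can run a small check with the standard library's `importlib.metadata` (Python 3.8+). `installed_version` is a hypothetical helper name:

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(dist_name):
    # Return the installed version string, or None if the package is absent
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


print("numpy:", installed_version("numpy"))
```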

The above is based on my own experience; I hope it serves as a useful reference.

Original article: https://blog.csdn.net/weixin_42099082/article/details/89365643