Preface

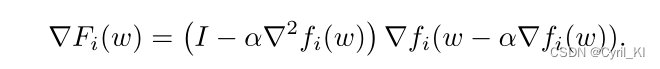

While implementing Per-FedAvg, I ran into the following problem:

From this we can see that we need the Hessian matrix of the loss function with respect to the model parameters.
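
As a hedged reference, assuming the MAML-style per-client objective that Per-FedAvg is built on (local loss $f_i$, one local gradient step with step size $\alpha$), the Hessian appears when differentiating through that step. This is the generic chain-rule result, not a transcription of the figure:

$$
F_i(w) = f_i\big(w - \alpha \nabla f_i(w)\big)
$$

$$
\nabla F_i(w) = \big(I - \alpha \nabla^2 f_i(w)\big)\, \nabla f_i\big(w - \alpha \nabla f_i(w)\big),
\qquad
\big[\nabla^2 f_i(w)\big]_{jk} = \frac{\partial^2 f_i(w)}{\partial w_j \, \partial w_k}
$$

Here $I$ is the identity matrix; because the Hessian is symmetric, the Jacobian of the inner step needs no transpose.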

Model definition

We define a fairly simple model:

```python
import torch
import torch.nn as nn


class ANN(nn.Module):
    def __init__(self):
        super(ANN, self).__init__()
        # Defined but never used in forward(), so the network is purely linear
        self.sigmoid = nn.Sigmoid()
        self.fc1 = nn.Linear(3, 4)
        self.fc2 = nn.Linear(4, 5)

    def forward(self, data):
        x = self.fc1(data)
        x = self.fc2(x)
        return x
```

Print the shapes of the model's parameters:

```python
model = ANN()
for param in model.parameters():
    print(param.size())
```

Output:

```
torch.Size([4, 3])
torch.Size([4])
torch.Size([5, 4])
torch.Size([5])
```
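
These four shapes fix the size of the problem. A small sketch that counts the parameters, assuming nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5)) as a stand-in for ANN (same layers, same parameter order): the full Hessian over all parameters is a 41 x 41 matrix, while its diagonal has only 41 entries.

```python
import torch.nn as nn

# Stand-in for ANN: same two Linear layers, same parameter order
model = nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5))

sizes = [p.numel() for p in model.parameters()]  # elements per parameter tensor
n = sum(sizes)
print(sizes, n)  # [12, 4, 20, 5] 41
print(n * n)     # 1681 entries in the full Hessian
```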

Computing the Hessian matrix

First, define the data and the loss:

```python
data = torch.tensor([1, 2, 3], dtype=torch.float)
label = torch.tensor([1, 1, 5, 7, 8], dtype=torch.float)
pred = model(data)
loss_fn = nn.MSELoss()
loss = loss_fn(pred, label)
```

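Then compute the first-order gradients. create_graph=True records the graph of this backward pass, so the resulting gradients can themselves be differentiated a second time: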

```python
grads = torch.autograd.grad(loss, model.parameters(), retain_graph=True, create_graph=True)
```
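
To see why create_graph=True matters, here is a minimal scalar sketch (the function x³ and the point x = 2 are illustrative choices, not from the original): the first call returns 3x² = 12 as a differentiable tensor, and differentiating it again gives 6x = 12. Without create_graph, the returned gradient is a plain tensor that cannot be differentiated further.

```python
import torch

x = torch.tensor(2.0, requires_grad=True)
y = x ** 3

# create_graph=True keeps the backward graph, so dy/dx can be differentiated again
(dy_dx,) = torch.autograd.grad(y, x, create_graph=True)
(d2y_dx2,) = torch.autograd.grad(dy_dx, x)
print(dy_dx.item(), d2y_dx2.item())  # 12.0 12.0

# Without create_graph the gradient is detached from any graph
(g_plain,) = torch.autograd.grad(x ** 3, x)
print(g_plain.requires_grad)  # False
```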

Print grads:

```
(tensor([[-1.0530, -2.1059, -3.1589],
        [ 2.3615, 4.7229, 7.0844],
        [-1.5046, -3.0093, -4.5139],
        [-2.0272, -4.0543, -6.0815]], grad_fn=<TBackward0>), tensor([-1.0530, 2.3615, -1.5046, -2.0272], grad_fn=<SqueezeBackward1>), tensor([[ 0.2945, -0.2725, -0.8159, -0.6720],
        [ 0.1936, -0.1791, -0.5362, -0.4416],
        [ 1.0800, -0.9993, -2.9918, -2.4641],
        [ 1.3448, -1.2444, -3.7255, -3.0683],
        [ 1.2436, -1.1507, -3.4450, -2.8373]], grad_fn=<TBackward0>), tensor([-0.6045, -0.3972, -2.2165, -2.7600, -2.5522],
       grad_fn=<MseLossBackwardBackward0>))
```

There are four tensors in total: the gradients of the loss with respect to the four parameter tensors (two layers, each with a weight and a bias).
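
These gradients have closed forms for this linear network, which makes them easy to sanity-check. In the printed output, for instance, each row of the fc1 weight gradient is the matching fc1 bias entry times data = [1, 2, 3]. A sketch of such a check, assuming nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5)) as a stand-in for ANN and a fixed torch.manual_seed(0), so the numbers differ from the output above but the identities hold:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
# Stand-in for ANN: the same two Linear layers (ANN's sigmoid is unused in forward)
model = nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5))
data = torch.tensor([1, 2, 3], dtype=torch.float)
label = torch.tensor([1, 1, 5, 7, 8], dtype=torch.float)

hidden = model[0](data)  # output of fc1
pred = model[1](hidden)  # output of fc2
loss = nn.MSELoss()(pred, label)
grads = torch.autograd.grad(loss, model.parameters(), create_graph=True)

# MSELoss averages over the 5 outputs: L = (1/5) * sum((pred - label)^2)
residual = (2 * (pred - label) / 5).detach()
checks = {
    # dL/db2 = 2/5 * (pred - label)
    "fc2.bias": torch.allclose(grads[3].detach(), residual),
    # dL/dW2 = outer(dL/db2, hidden)
    "fc2.weight": torch.allclose(grads[2].detach(), torch.outer(residual, hidden.detach())),
    # dL/dW1 = outer(dL/db1, data)
    "fc1.weight": torch.allclose(grads[0].detach(), torch.outer(grads[1].detach(), data)),
}
print(checks)
```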

Next, compute the second-order derivatives for each gradient tensor:

```python
hessian_params = []
for k in range(len(grads)):
    hess_params = torch.zeros_like(grads[k])
    for i in range(grads[k].size(0)):
        # Check whether this is a weight (2-D) or a bias (1-D)
        if len(grads[k].size()) == 2:
            # Weight: differentiate entry [i, j] again and keep d^2L/dw_ij^2
            for j in range(grads[k].size(1)):
                hess_params[i, j] = torch.autograd.grad(grads[k][i][j], model.parameters(), retain_graph=True)[k][i, j]
        else:
            # Bias: differentiate entry [i] again and keep d^2L/db_i^2
            hess_params[i] = torch.autograd.grad(grads[k][i], model.parameters(), retain_graph=True)[k][i]
    hessian_params.append(hess_params)
```

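Note: the model has two layers, each with a weight and a bias. The weights are 2-D and the biases are 1-D, so when taking the second derivatives the code has to check which case it is in before indexing into the gradient.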
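
The weight/bias branch can be avoided by flattening each gradient tensor with reshape(-1), which handles 1-D biases and 2-D weights the same way. A minimal sketch, assuming nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5)) as a stand-in for ANN, a fixed torch.manual_seed(0), and a hypothetical helper named hessian_diagonal. It also differentiates each entry only with respect to its own parameter tensor, instead of all of model.parameters() followed by [k], which skips gradients that would be thrown away.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
# Stand-in for ANN: the same two Linear layers in the same order
model = nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5))
data = torch.tensor([1, 2, 3], dtype=torch.float)
label = torch.tensor([1, 1, 5, 7, 8], dtype=torch.float)

params = list(model.parameters())
loss = nn.MSELoss()(model(data), label)
grads = torch.autograd.grad(loss, params, create_graph=True)


def hessian_diagonal(grads, params):
    """Hypothetical helper: d^2 L / d theta^2 for every entry, shaped like each parameter."""
    diag = []
    for g, p in zip(grads, params):
        flat_g = g.reshape(-1)  # same code path for 2-D weights and 1-D biases
        flat_d = torch.zeros_like(flat_g)
        for idx in range(flat_g.numel()):
            # Differentiate one gradient entry w.r.t. its own parameter tensor only
            (second,) = torch.autograd.grad(flat_g[idx], p, retain_graph=True)
            flat_d[idx] = second.reshape(-1)[idx]
        diag.append(flat_d.view_as(p))
    return diag


diag = hessian_diagonal(grads, params)
print([tuple(d.shape) for d in diag])  # [(4, 3), (4,), (5, 4), (5,)]
print(diag[3])  # analytically, every fc2 bias entry is 2/5 = 0.4
```

Because the network is linear, the diagonal has a closed form that serves as a check: 2/5 for each fc2 bias, 2/5 · h_j² for fc2 weight column j (h is the fc1 output), and 2/5 · Σ_i W2[i, j]² (times data_m² for the fc1 weights) for the first layer.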

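The resulting hessian_params is a list of four tensors holding the second derivatives of the loss with respect to the weights and biases of the two layers. Each entry is the loss differentiated twice with respect to the same single parameter (∂²L/∂θ²), so these are the diagonal entries of the Hessian; the mixed terms ∂²L/∂θ_j∂θ_k with j ≠ k are not stored.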
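
If the full matrix is needed, one option is to rewrite the loss as a function of a single flat parameter vector and call torch.autograd.functional.hessian on it; the diagonal of the result corresponds entry by entry to what hessian_params stores. If only the Hessian multiplied by a vector is needed, as in MAML-style gradients, a second torch.autograd.grad call with grad_outputs computes it without forming the matrix. A sketch assuming nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5)) as a stand-in for ANN, a fixed torch.manual_seed(0), and a hypothetical function loss_of_theta:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
# Stand-in for ANN: same layers, parameter order fc1.weight, fc1.bias, fc2.weight, fc2.bias
model = nn.Sequential(nn.Linear(3, 4), nn.Linear(4, 5))
data = torch.tensor([1, 2, 3], dtype=torch.float)
label = torch.tensor([1, 1, 5, 7, 8], dtype=torch.float)

params = list(model.parameters())
shapes = [p.shape for p in params]
sizes = [p.numel() for p in params]  # [12, 4, 20, 5]
theta0 = torch.cat([p.detach().reshape(-1) for p in params])  # 41 values


def loss_of_theta(theta):
    # Hypothetical helper: unflatten theta and run the same forward pass as the model
    w1, b1, w2, b2 = [t.view(s) for t, s in zip(torch.split(theta, sizes), shapes)]
    pred = F.linear(F.linear(data, w1, b1), w2, b2)
    return F.mse_loss(pred, label)


# Full Hessian over all 41 parameters
H = torch.autograd.functional.hessian(loss_of_theta, theta0)
print(H.shape)  # torch.Size([41, 41])

# Hessian-vector product by double backward, without building H
loss = F.mse_loss(model(data), label)
grads = torch.autograd.grad(loss, params, create_graph=True)
v = torch.randn(theta0.numel())
v_parts = [t.view(s) for t, s in zip(torch.split(v, sizes), shapes)]
hv = torch.autograd.grad(grads, params, grad_outputs=v_parts)
hv_flat = torch.cat([t.reshape(-1) for t in hv])
print(torch.allclose(hv_flat, H @ v, atol=1e-4))
```

The Hessian-vector route costs roughly one extra backward pass, whereas the full matrix grows quadratically with the number of parameters, so for larger models the product is usually the practical choice.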


Original article: https://blog.csdn.net/Cyril_KI/article/details/124562109