What is the MobileNetV2 model

MobileNet's big brother, MobileNetV2, is pretty good too.

MobileNet is a lightweight deep neural network proposed by Google for mobile phones and other embedded devices. Its core idea is the depthwise separable convolution.
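To make the saving concrete, here is a minimal sketch that counts the weights of a standard convolution against a depthwise separable one. The helper names and the 3x3, 32-to-64-channel example are illustrative assumptions rather than part of the MobileNet code, and biases are ignored.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in a kernel x kernel standard convolution (bias omitted)."""
    return kernel * kernel * c_in * c_out


def depthwise_separable_params(kernel, c_in, c_out):
    """Weights in a depthwise conv followed by a 1x1 pointwise conv (bias omitted)."""
    depthwise = kernel * kernel * c_in   # one kernel x kernel filter per input channel
    pointwise = c_in * c_out             # 1x1 conv that mixes the channels
    return depthwise + pointwise


if __name__ == "__main__":
    std = standard_conv_params(3, 32, 64)
    sep = depthwise_separable_params(3, 32, 64)
    print("standard:", std)              # 18432
    print("separable:", sep)             # 2336
    print("ratio: %.2f" % (std / sep))
```

For a 3x3 kernel the separable form needs roughly an eighth of the weights in this example, which is where most of MobileNet's size reduction comes from.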

MobileNetV2 is an upgraded version of MobileNet with two distinguishing features:

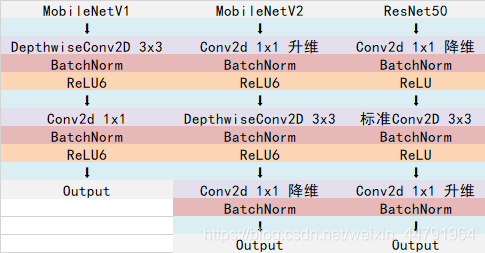

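1. Inverted residuals. In ResNet50 we met the bottleneck design: a 1x1 convolution reduces the channel dimension before the 3x3 convolution, and another 1x1 convolution restores it afterwards. Compared with using a 3x3 convolution directly, this works better and needs fewer parameters: compress first, then expand. MobileNetV2 uses the inverted residual structure instead: a 1x1 convolution increases the channel dimension before the 3x3 (depthwise) convolution, and a 1x1 convolution reduces it afterwards: expand first, then compress.

2. Linear bottlenecks. To keep ReLU from destroying features, no ReLU6 is applied after the final 1x1 convolution that reduces the channel dimension. The projected output goes straight into the residual addition.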
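The two points above can be sketched as plain channel bookkeeping. This is only an illustration: the function names are made up here, the reduction factor of 4 is the usual ResNet choice and is an assumption, and the expansion factor of 6 and the residual condition are the ones used by the implementation later in this article.

```python
def resnet_bottleneck(channels, reduction=4):
    """Channel widths for 1x1 reduce -> 3x3 conv -> 1x1 expand (compress, then expand)."""
    mid = channels // reduction
    return [channels, mid, mid, channels]


def inverted_residual(in_channels, out_channels, expansion=6, stride=1):
    """Describe one inverted residual block: 1x1 expand -> 3x3 depthwise -> 1x1 project."""
    mid = in_channels * expansion
    return {
        "channels": [in_channels, mid, mid, out_channels],
        # ReLU6 follows the expansion and depthwise convs; the projection stays linear.
        "activations": ["relu6", "relu6", None],
        # The shortcut is only added when input and output shapes match.
        "residual_add": stride == 1 and in_channels == out_channels,
    }


if __name__ == "__main__":
    print(resnet_bottleneck(256))
    print(inverted_residual(24, 24))
    print(inverted_residual(16, 24, stride=2))
```

The wide part of the ResNet block is at its ends, while the wide part of the inverted residual block is in the middle, and only the narrow output skips the activation.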

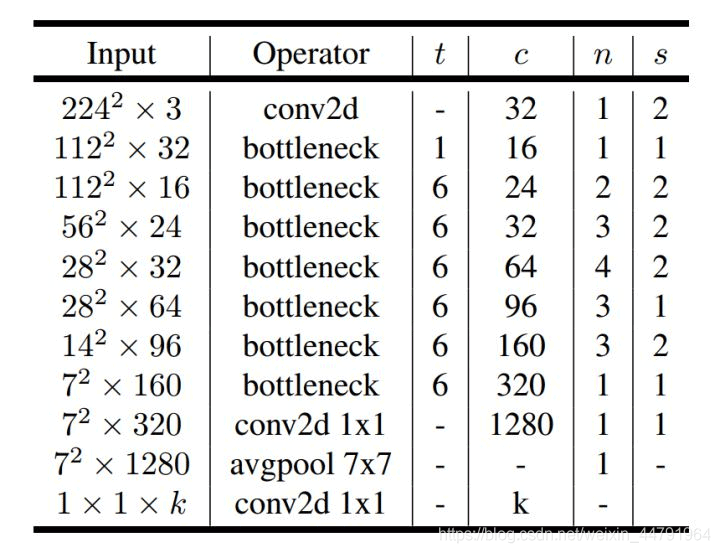

The overall network structure is as follows (the bottleneck operation is the new block described above):
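As a rough sketch, the stage layout can also be written down as data. The tuples below are read off the `_inverted_res_block` calls in this article's implementation; the names `STAGES`, `trace_shapes` and `count_blocks` are hypothetical helpers introduced only for this illustration.

```python
# (expansion, output channels, number of blocks, stride of the first block)
STAGES = [
    (1, 16, 1, 1),
    (6, 24, 2, 2),
    (6, 32, 3, 2),
    (6, 64, 4, 2),
    (6, 96, 3, 1),
    (6, 160, 3, 2),
    (6, 320, 1, 1),
]


def trace_shapes(input_size=224, stem_channels=32):
    """Return (spatial size, channels) after the stem and after each stage."""
    size = input_size // 2               # the stem convolution has stride 2
    shapes = [(size, stem_channels)]
    for _expansion, channels, _repeats, stride in STAGES:
        size //= stride                  # only the first block of a stage uses the stride
        shapes.append((size, channels))
    return shapes


def count_blocks():
    """Total number of inverted residual blocks across all stages."""
    return sum(repeats for _expansion, _channels, repeats, _stride in STAGES)


if __name__ == "__main__":
    for size, channels in trace_shapes():
        print("%dx%dx%d" % (size, size, channels))
    print("blocks:", count_blocks())
```

With a 224x224 input and alpha = 1.0 this reproduces the shape comments in the code: 112x112x32 after the stem, down to 7x7x320 before the final 1x1 convolution.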

MobileNetV2 network implementation

```python
#-------------------------------------------------------------#
#   MobileNetV2 network definition
#   Written against tensorflow.keras (TensorFlow 2.x assumed)
#-------------------------------------------------------------#
import numpy as np
from tensorflow.keras import backend
from tensorflow.keras.applications.imagenet_utils import decode_predictions
from tensorflow.keras.layers import (Activation, Add, BatchNormalization,
                                     Conv2D, Dense, DepthwiseConv2D,
                                     GlobalAveragePooling2D, Input,
                                     ZeroPadding2D)
from tensorflow.keras.models import Model
from tensorflow.keras.preprocessing import image
from tensorflow.keras.utils import get_file

# TODO Change path to v1.1
BASE_WEIGHT_PATH = ('https://github.com/JonathanCMitchell/mobilenet_v2_keras/'
                    'releases/download/v1.1/')


# ReLU capped at 6
def relu6(x):
    return backend.relu(x, max_value=6)


# Compute the zero padding applied before a stride-2 convolution
def correct_pad(inputs, kernel_size):
    img_dim = 1
    input_size = backend.int_shape(inputs)[img_dim:(img_dim + 2)]

    if isinstance(kernel_size, int):
        kernel_size = (kernel_size, kernel_size)

    if input_size[0] is None:
        adjust = (1, 1)
    else:
        adjust = (1 - input_size[0] % 2, 1 - input_size[1] % 2)

    correct = (kernel_size[0] // 2, kernel_size[1] // 2)

    return ((correct[0] - adjust[0], correct[0]),
            (correct[1] - adjust[1], correct[1]))


# Round channel counts to a multiple of 8, needed because of the width multiplier alpha
def _make_divisible(v, divisor, min_value=None):
    if min_value is None:
        min_value = divisor
    new_v = max(min_value, int(v + divisor / 2) // divisor * divisor)
    # Make sure rounding down does not remove more than 10% of the channels
    if new_v < 0.9 * v:
        new_v += divisor
    return new_v


def MobileNetV2(input_shape=(224, 224, 3), alpha=1.0, include_top=True,
                weights='imagenet', classes=1000):
    rows = input_shape[0]
    img_input = Input(shape=input_shape)

    # Stem
    # 224,224,3 -> 112,112,32
    first_block_filters = _make_divisible(32 * alpha, 8)
    x = ZeroPadding2D(padding=correct_pad(img_input, 3),
                      name='Conv1_pad')(img_input)
    x = Conv2D(first_block_filters, kernel_size=3, strides=(2, 2),
               padding='valid', use_bias=False, name='Conv1')(x)
    x = BatchNormalization(epsilon=1e-3, momentum=0.999, name='bn_Conv1')(x)
    x = Activation(relu6, name='Conv1_relu')(x)

    # 112,112,32 -> 112,112,16
    x = _inverted_res_block(x, filters=16, alpha=alpha, stride=1, expansion=1, block_id=0)

    # 112,112,16 -> 56,56,24
    x = _inverted_res_block(x, filters=24, alpha=alpha, stride=2, expansion=6, block_id=1)
    x = _inverted_res_block(x, filters=24, alpha=alpha, stride=1, expansion=6, block_id=2)

    # 56,56,24 -> 28,28,32
    x = _inverted_res_block(x, filters=32, alpha=alpha, stride=2, expansion=6, block_id=3)
    x = _inverted_res_block(x, filters=32, alpha=alpha, stride=1, expansion=6, block_id=4)
    x = _inverted_res_block(x, filters=32, alpha=alpha, stride=1, expansion=6, block_id=5)

    # 28,28,32 -> 14,14,64
    x = _inverted_res_block(x, filters=64, alpha=alpha, stride=2, expansion=6, block_id=6)
    x = _inverted_res_block(x, filters=64, alpha=alpha, stride=1, expansion=6, block_id=7)
    x = _inverted_res_block(x, filters=64, alpha=alpha, stride=1, expansion=6, block_id=8)
    x = _inverted_res_block(x, filters=64, alpha=alpha, stride=1, expansion=6, block_id=9)

    # 14,14,64 -> 14,14,96
    x = _inverted_res_block(x, filters=96, alpha=alpha, stride=1, expansion=6, block_id=10)
    x = _inverted_res_block(x, filters=96, alpha=alpha, stride=1, expansion=6, block_id=11)
    x = _inverted_res_block(x, filters=96, alpha=alpha, stride=1, expansion=6, block_id=12)

    # 14,14,96 -> 7,7,160
    x = _inverted_res_block(x, filters=160, alpha=alpha, stride=2, expansion=6, block_id=13)
    x = _inverted_res_block(x, filters=160, alpha=alpha, stride=1, expansion=6, block_id=14)
    x = _inverted_res_block(x, filters=160, alpha=alpha, stride=1, expansion=6, block_id=15)

    # 7,7,160 -> 7,7,320
    x = _inverted_res_block(x, filters=320, alpha=alpha, stride=1, expansion=6, block_id=16)

    if alpha > 1.0:
        last_block_filters = _make_divisible(1280 * alpha, 8)
    else:
        last_block_filters = 1280

    # 7,7,320 -> 7,7,1280
    x = Conv2D(last_block_filters, kernel_size=1, use_bias=False, name='Conv_1')(x)
    x = BatchNormalization(epsilon=1e-3, momentum=0.999, name='Conv_1_bn')(x)
    x = Activation(relu6, name='out_relu')(x)

    # Classification head; skipped when include_top is False so that the
    # layers match the "_no_top" weight file loaded below
    if include_top:
        x = GlobalAveragePooling2D()(x)
        x = Dense(classes, activation='softmax', use_bias=True, name='Logits')(x)

    inputs = img_input
    model = Model(inputs, x, name='mobilenetv2_%0.2f_%s' % (alpha, rows))

    # Load weights.
    if weights == 'imagenet':
        if include_top:
            model_name = ('mobilenet_v2_weights_tf_dim_ordering_tf_kernels_' +
                          str(alpha) + '_' + str(rows) + '.h5')
        else:
            model_name = ('mobilenet_v2_weights_tf_dim_ordering_tf_kernels_' +
                          str(alpha) + '_' + str(rows) + '_no_top' + '.h5')
        weight_path = BASE_WEIGHT_PATH + model_name
        weights_path = get_file(model_name, weight_path, cache_subdir='models')
        model.load_weights(weights_path)
    elif weights is not None:
        model.load_weights(weights)

    return model


def _inverted_res_block(inputs, expansion, stride, alpha, filters, block_id):
    in_channels = backend.int_shape(inputs)[-1]
    pointwise_conv_filters = int(filters * alpha)
    pointwise_filters = _make_divisible(pointwise_conv_filters, 8)
    x = inputs
    prefix = 'block_{}_'.format(block_id)

    # Part 1: expand the channels (skipped for the very first block)
    if block_id:
        # Expand
        x = Conv2D(expansion * in_channels, kernel_size=1, padding='same',
                   use_bias=False, activation=None,
                   name=prefix + 'expand')(x)
        x = BatchNormalization(epsilon=1e-3, momentum=0.999,
                               name=prefix + 'expand_BN')(x)
        x = Activation(relu6, name=prefix + 'expand_relu')(x)
    else:
        prefix = 'expanded_conv_'

    if stride == 2:
        x = ZeroPadding2D(padding=correct_pad(x, 3), name=prefix + 'pad')(x)

    # Part 2: 3x3 depthwise convolution
    x = DepthwiseConv2D(kernel_size=3, strides=stride, activation=None,
                        use_bias=False,
                        padding='same' if stride == 1 else 'valid',
                        name=prefix + 'depthwise')(x)
    x = BatchNormalization(epsilon=1e-3, momentum=0.999,
                           name=prefix + 'depthwise_BN')(x)
    x = Activation(relu6, name=prefix + 'depthwise_relu')(x)

    # Part 3: project back down; no ReLU here, so the features are not destroyed
    x = Conv2D(pointwise_filters, kernel_size=1, padding='same',
               use_bias=False, activation=None,
               name=prefix + 'project')(x)
    x = BatchNormalization(epsilon=1e-3, momentum=0.999,
                           name=prefix + 'project_BN')(x)

    # Residual connection only when the input and output shapes match
    if in_channels == pointwise_filters and stride == 1:
        return Add(name=prefix + 'add')([inputs, x])
    return x
```
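The padding arithmetic in `correct_pad` is easy to check by hand. The sketch below repeats the same computation on a plain (height, width) tuple instead of a Keras tensor; `correct_pad_sizes` and `valid_conv_output` are hypothetical helpers written for this illustration, not part of the network code.

```python
def correct_pad_sizes(input_size, kernel_size=3):
    """Same arithmetic as correct_pad, applied to a (height, width) tuple."""
    adjust = (1 - input_size[0] % 2, 1 - input_size[1] % 2)
    correct = (kernel_size // 2, kernel_size // 2)
    return ((correct[0] - adjust[0], correct[0]),
            (correct[1] - adjust[1], correct[1]))


def valid_conv_output(size, pad, kernel_size=3, stride=2):
    """Output length along one axis of a 'valid' convolution after zero padding."""
    padded = size + pad[0] + pad[1]
    return (padded - kernel_size) // stride + 1


if __name__ == "__main__":
    pad = correct_pad_sizes((224, 224))
    print(pad)                               # ((0, 1), (0, 1))
    print(valid_conv_output(224, pad[0]))    # 112
```

For an even input size the function pads only one side of each axis, so a stride-2 'valid' convolution halves the resolution exactly: 224 becomes 112, and later 56 becomes 28.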

Image prediction

Once the network is built, the following code can be used to run a prediction.

```python
# Continues the same file as the network definition above
# (uses its imports and the MobileNetV2 function)
def preprocess_input(x):
    # Scale pixel values from [0, 255] to [-1, 1]
    x /= 255.
    x -= 0.5
    x *= 2.
    return x


if __name__ == '__main__':
    model = MobileNetV2(input_shape=(224, 224, 3))
    model.summary()

    img_path = 'elephant.jpg'
    img = image.load_img(img_path, target_size=(224, 224))
    x = image.img_to_array(img)
    x = np.expand_dims(x, axis=0)   # add the batch dimension
    x = preprocess_input(x)
    print('Input image shape:', x.shape)

    preds = model.predict(x)
    print(np.argmax(preds))
    print('Predicted:', decode_predictions(preds, 1))
```
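The three in-place steps in `preprocess_input` simply map pixel values from [0, 255] to [-1, 1]. A minimal sketch on single values, using a hypothetical `scale_pixel` helper rather than the array version above:

```python
def scale_pixel(value):
    """Apply the same three steps as preprocess_input to one pixel value."""
    value = value / 255.0   # [0, 255] -> [0, 1]
    value = value - 0.5     # [0, 1]   -> [-0.5, 0.5]
    value = value * 2.0     # [-0.5, 0.5] -> [-1, 1]
    return value


if __name__ == "__main__":
    for pixel in (0, 127.5, 255):
        print(pixel, "->", scale_pixel(pixel))
```

Black maps to -1.0, mid-grey to 0.0 and white to 1.0.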

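The pretrained MobileNetV2 weights needed for prediction are downloaded automatically at run time. After the download they are stored in the `C:\Users\Administrator\.keras\models` folder.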

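Changing the alpha value passed to MobileNetV2 gives MobileNetV2 models of different widths, since alpha scales the number of channels in each layer. The available alpha values are: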

| Model | Top-1 error (%) | Top-5 error (%) | 10-5 error (%) | Size | Stem |
|---|---|---|---|---|---|
| MobileNetV2(alpha=0.35) | 39.914 | 17.568 | 15.422 | 1.7M | 0.4M |
| MobileNetV2(alpha=0.50) | 34.806 | 13.938 | 11.976 | 2.0M | 0.7M |
| MobileNetV2(alpha=0.75) | 30.468 | 10.824 | 9.188 | 2.7M | 1.4M |
| MobileNetV2(alpha=1.0) | 28.664 | 9.858 | 8.322 | 3.5M | 2.3M |
| MobileNetV2(alpha=1.3) | 25.320 | 7.878 | 6.728 | 5.4M | 3.8M |
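To see what alpha actually does to the network, the sketch below applies the same rounding rule as the network code to the base filter counts of the seven stages. `_make_divisible` is copied from the implementation above; `BASE_FILTERS` and `block_filters` are hypothetical names introduced for this illustration.

```python
def _make_divisible(v, divisor, min_value=None):
    # Round to the nearest multiple of `divisor`, but never end up
    # more than 10% below the requested value.
    if min_value is None:
        min_value = divisor
    new_v = max(min_value, int(v + divisor / 2) // divisor * divisor)
    if new_v < 0.9 * v:
        new_v += divisor
    return new_v


# Output channels of the seven stages at alpha = 1.0
BASE_FILTERS = [16, 24, 32, 64, 96, 160, 320]


def block_filters(alpha):
    """Output channels of each stage for a given alpha, as in _inverted_res_block."""
    return [_make_divisible(int(f * alpha), 8) for f in BASE_FILTERS]


if __name__ == "__main__":
    for alpha in (0.5, 0.75, 1.0):
        print(alpha, block_filters(alpha))
```

Every stage gets narrower as alpha shrinks, but the rounding keeps each channel count a multiple of 8, which is why some stages share the same width at small alpha.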


Original link: https://blog.csdn.net/weixin_44791964/article/details/102851214