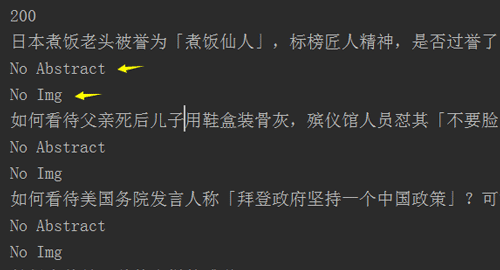

1. Failing code: the abstract and the detail URL cannot be retrieved

```python
import asyncio

import aiohttp
from bs4 import BeautifulSoup

headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36',
    'referer': 'https://www.baidu.com/s?tn=02003390_43_hao_pg&isource=infinity&iname=baidu&itype=web&ie=utf-8&wd=%E7%9F%A5%E4%B9%8E%E7%83%AD%E6%A6%9C',
}


async def getPages(url):
    async with aiohttp.ClientSession(headers=headers) as session:
        async with session.get(url) as resp:
            print(resp.status)  # print the HTTP status code
            html = await resp.text()
            soup = BeautifulSoup(html, 'lxml')
            items = soup.select('.HotList-item')
            for item in items:
                title = item.select('.HotList-itemTitle')[0].text
                try:
                    abstract = item.select('.HotList-itemExcerpt')[0].text
                except IndexError:
                    # No matching element in the static HTML
                    abstract = 'No Abstract'
                hot = item.select('.HotList-itemMetrics')[0].text
                try:
                    # NOTE: select() returns a list, so indexing it with 'src'
                    # raises TypeError and the fallback below is always used.
                    img = item.select('.HotList-itemImgContainer img')['src']
                except (IndexError, TypeError, KeyError):
                    img = 'No Img'
                print("{}\n{}\n{}".format(title, abstract, img))


if __name__ == '__main__':
    url = 'https://www.zhihu.com/billboard'
    # asyncio.run() replaces the older get_event_loop()/run_until_complete() pattern
    asyncio.run(getPages(url))
```

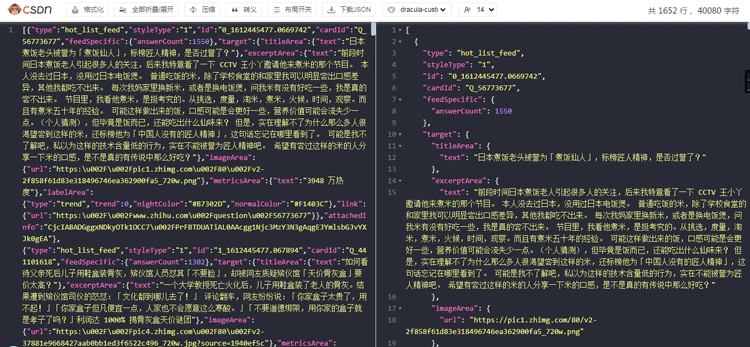

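2. Inspecting the JS code

The detail link, image link, question abstract, and so on all turn out to live in the JS data embedded in the page (the CSDN developer-assistant plugin really is handy for spotting this).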

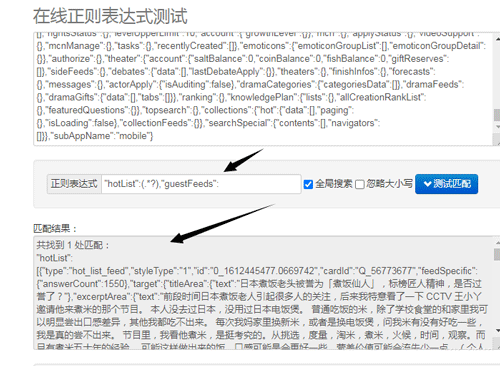

A regular expression can pull that information out of the page source:
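As a minimal illustration of the idea, the sketch below runs the same pattern against a tiny made-up string rather than the real page. The `sample_html` content and the example URL are assumptions for demonstration only; the real page source is much larger and its surrounding structure may differ.

```python
import json
import re

# Made-up stand-in for the page source (assumption, not real Zhihu data).
sample_html = (
    '<script>{"hotList":[{"target":{"titleArea":{"text":"Example question"},'
    '"link":{"url":"https://example.com/q/1"}}}],"guestFeeds":{}}</script>'
)

# Capture everything between the "hotList" key and the "guestFeeds" key.
regex = re.compile(r'"hotList":(.*?),"guestFeeds":', re.S)
match = regex.search(sample_html)

# The captured group is a JSON array, so json.loads turns it into a list of dicts.
hot_list = json.loads(match.group(1))
titles = [item['target']['titleArea']['text'] for item in hot_list]
print(titles)
```

The non-greedy `(.*?)` stops at the first `,"guestFeeds":`, which is why the captured text is a complete JSON array here.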

Here is the full code:

```python
import asyncio
import json
import re

import aiohttp

headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36',
    'referer': 'https://www.baidu.com/s?tn=02003390_43_hao_pg&isource=infinity&iname=baidu&itype=web&ie=utf-8&wd=%E7%9F%A5%E4%B9%8E%E7%83%AD%E6%A6%9C',
}


async def getPages(url):
    async with aiohttp.ClientSession(headers=headers) as session:
        async with session.get(url) as resp:
            print(resp.status)  # print the HTTP status code
            html = await resp.text()
            # The hot list is embedded as JSON between these two keys
            regex = re.compile(r'"hotList":(.*?),"guestFeeds":')
            text = regex.search(html).group(1)
            # print(json.loads(text))  # convert the JSON into Python dicts
            for item in json.loads(text):
                title = item['target']['titleArea']['text']
                question = item['target']['excerptArea']['text']
                hot = item['target']['metricsArea']['text']
                link = item['target']['link']['url']
                img = item['target']['imageArea']['url']
                if not img:
                    img = 'No Img'
                if not question:
                    question = 'No Abstract'
                print("Title:{}\nPopular:{}\nQuestion:{}\nLink:{}\nImg:{}".format(title, hot, question, link, img))


if __name__ == '__main__':
    url = 'https://www.zhihu.com/billboard'
    # asyncio.run() replaces the older get_event_loop()/run_until_complete() pattern
    asyncio.run(getPages(url))
```
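The regex above depends on `"guestFeeds"` being the key that immediately follows `"hotList"`, and `regex.search(html)` returns `None` if the page layout changes. One possible alternative, sketched here under that caveat, is to find the `"hotList":` marker and let the standard library's `json.JSONDecoder().raw_decode` read exactly one JSON value from that position. The helper name `extract_hot_list` is hypothetical and not part of the original article.

```python
import json


def extract_hot_list(html: str) -> list:
    """Return the JSON array that follows the "hotList" key, or [] if absent.

    Hypothetical helper: it assumes the page embeds the data as
    '"hotList":[ ... ]' somewhere in its source.
    """
    marker = '"hotList":'
    start = html.find(marker)
    if start == -1:
        return []  # marker missing, e.g. the page layout changed
    idx = start + len(marker)
    # raw_decode does not skip leading whitespace, so do it manually.
    while idx < len(html) and html[idx].isspace():
        idx += 1
    # Parse one JSON value starting at idx; trailing text is ignored.
    value, _end = json.JSONDecoder().raw_decode(html, idx)
    return value


# Usage on a made-up string (not real page data):
demo = 'prefix {"hotList": [{"target": {"titleArea": {"text": "Demo"}}}], "other": 1} suffix'
print(extract_hot_list(demo))
```

Because the JSON decoder decides where the array ends, this variant does not need to know which key comes after `"hotList"`.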


Original article: https://kantlee.blog.csdn.net/article/details/113665084