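Installing bs4

To use BeautifulSoup4 with the lxml parser, as this article does, install lxml first and then bs4 (the bs4 package on PyPI is a thin wrapper that pulls in beautifulsoup4):

    pip install lxml

    pip install bs4

Usage:

    from bs4 import BeautifulSoup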
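A quick way to confirm that the installation worked is to import the package and print its version. The following is only a minimal sketch: the lxml check relies on the standard library alone, so it runs even when lxml is missing.

```python
import importlib.util

import bs4

# bs4.__version__ is the version string of the installed beautifulsoup4 package
print("beautifulsoup4 version:", bs4.__version__)

# find_spec returns None when a module cannot be found, so nothing is raised here
has_lxml = importlib.util.find_spec("lxml") is not None
print("lxml installed:", has_lxml)
```

If the second line prints False, the 'lxml' parser name used throughout this article will not be available until lxml is installed.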

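Comparing lxml and bs4

With lxml, you build an element tree and query it with XPath (html is a string of HTML markup; the XPath expression here is only an example):

    from lxml import etree

    tree = etree.HTML(html)
    tree.xpath('//a/@href')

With bs4, you build a soup object and name the parser to use:

    from bs4 import BeautifulSoup

    soup = BeautifulSoup(html_doc, 'lxml')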

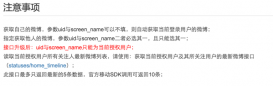

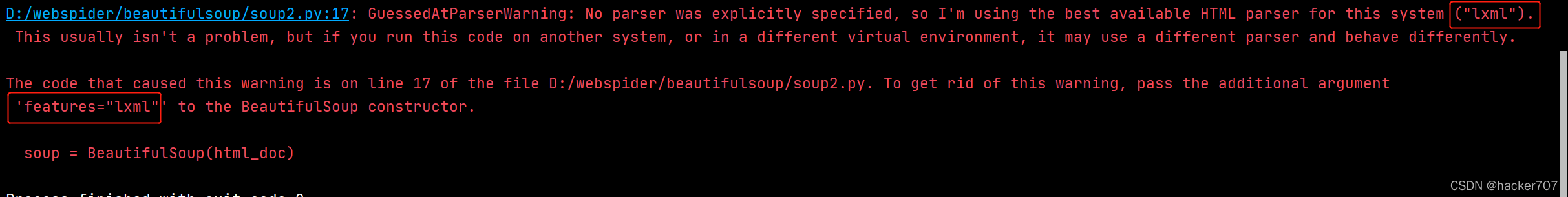
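To make the comparison concrete, here is a small side-by-side sketch that extracts the same href values with both libraries. The markup and variable names are made up for illustration, and it assumes both lxml and bs4 are installed.

```python
from bs4 import BeautifulSoup
from lxml import etree

html = '<p><a href="/a">A</a><a href="/b">B</a></p>'

# lxml: build an element tree, then query it with an XPath expression
tree = etree.HTML(html)
hrefs_lxml = [str(href) for href in tree.xpath('//a/@href')]

# bs4: build a soup object, then search it with find_all and read the attribute
soup = BeautifulSoup(html, 'lxml')
hrefs_bs4 = [a['href'] for a in soup.find_all('a')]

print(hrefs_lxml)  # ['/a', '/b']
print(hrefs_bs4)   # ['/a', '/b']
```

Both approaches reach the same data; lxml addresses nodes through XPath strings, while bs4 exposes them through Python methods and attributes.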

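Notes:

If you create the soup object without passing 'lxml' (either positionally or as features="lxml"), Beautiful Soup warns that no parser was explicitly specified and picks the best parser it can find on the system.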
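The parser name can be given positionally or through the features keyword; both forms produce the same result. The sketch below uses the built-in 'html.parser' so that it runs without lxml (an assumption made for portability; replace it with 'lxml' to match the rest of the article).

```python
from bs4 import BeautifulSoup

html_doc = "<p>Hello, <b>soup</b></p>"

# Parser named as the second positional argument
soup_positional = BeautifulSoup(html_doc, "html.parser")

# Parser named through the features keyword
soup_keyword = BeautifulSoup(html_doc, features="html.parser")

print(soup_positional.b.string)                    # soup
print(str(soup_positional) == str(soup_keyword))   # True
```

Naming the parser explicitly also keeps the output stable across machines, since the automatically chosen parser depends on what happens to be installed.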

bs4 quick start

Parser comparison (for reference only)

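| Parser | Usage | Advantages | Disadvantages |
|---|---|---|---|
| Python standard library | BeautifulSoup(markup, 'html.parser') | Built into Python; moderate speed | Poor tolerance of malformed documents in Python versions before 2.7.3 or 3.2.2 |
| lxml HTML parser | BeautifulSoup(markup, 'lxml') | Fast; tolerant of malformed documents | Requires an external C library |
| lxml XML parser | BeautifulSoup(markup, 'lxml-xml') or BeautifulSoup(markup, 'xml') | Fast; the only parser that supports XML | Requires an external C library |
| html5lib | BeautifulSoup(markup, 'html5lib') | Most tolerant; parses documents the way a browser does; produces valid HTML5 | Slow; requires an external Python package |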
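The difference in tolerance shows up on invalid markup. The sketch below feeds the same broken fragment to each parser and skips any parser that is not installed; the loop and variable names are illustrative only.

```python
from bs4 import BeautifulSoup, FeatureNotFound

broken = "<a></p>"  # a stray closing tag with no matching opening tag

results = {}
for parser in ("html.parser", "lxml", "html5lib"):
    try:
        results[parser] = str(BeautifulSoup(broken, parser))
    except FeatureNotFound:
        # Raised by bs4 when the requested parser library is missing
        results[parser] = None

for parser, output in results.items():
    shown = output if output is not None else "(not installed)"
    print(f"{parser:12} {shown}")
```

With 'html.parser' the stray tag is simply dropped and the result is `<a></a>`; the other parsers, when available, repair the fragment in their own ways, which is why the same selector can behave differently depending on the parser.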

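Object types

Tag: an HTML tag

BeautifulSoup: the soup object, representing the whole parsed document

NavigableString: a navigable string, the text inside a tag

Comment: a comment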

    from bs4 import BeautifulSoup

    # Build a sample HTML string
    html_doc = """
    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>

    <p class="story">Once upon a time there were three little sisters; and their names were
    <a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
    <a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
    <a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>

    <p class="story">...</p>
    <span><!--example comment content--></span>
    """

    # Create the soup object
    soup = BeautifulSoup(html_doc, 'lxml')

    print(type(soup.title))         # <class 'bs4.element.Tag'>
    print(type(soup))               # <class 'bs4.BeautifulSoup'>
    print(type(soup.title.string))  # <class 'bs4.element.NavigableString'>
    print(type(soup.span.string))   # <class 'bs4.element.Comment'>
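Because Comment is a subclass of NavigableString, a comment can be mistaken for ordinary text unless it is checked for explicitly. A minimal sketch with made-up markup, using the built-in 'html.parser' so it runs without lxml:

```python
from bs4 import BeautifulSoup, Comment, NavigableString

soup = BeautifulSoup("<span><!--a note--></span><b>plain text</b>", "html.parser")

comment = soup.span.string  # the only child of <span> is a comment
text = soup.b.string        # the only child of <b> is ordinary text

print(isinstance(comment, Comment))          # True
print(isinstance(text, Comment))             # False
print(issubclass(Comment, NavigableString))  # True
print(comment)                               # a note
```

An isinstance check against Comment is therefore a simple way to skip comments when collecting text.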

Basic usage of bs4

Getting a tag's content

    from bs4 import BeautifulSoup

    # Build a sample HTML string
    html_doc = """
    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>

    <p class="story">Once upon a time there were three little sisters; and their names were
    <a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
    <a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
    <a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>

    <p class="story">...</p>
    </body>
    </html>
    """

    # Create the soup object
    soup = BeautifulSoup(html_doc, 'lxml')

    print('head tag content:\n', soup.head)  # print the head tag
    print('body tag content:\n', soup.body)  # print the body tag
    print('html tag content:\n', soup.html)  # print the html tag
    print('p tag content:\n', soup.p)        # print the p tag

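Note: printing soup.p shows only the first p tag. To get every p tag, use find_all:

    print('p tag content:\n', soup.find_all('p'))

Note that the tag name is passed to find_all as a string ('p'). find_all also accepts other filters, such as the list filter used further down.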
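The relationship between attribute access, find and find_all can be sketched as follows. The markup is made up for illustration, and the built-in 'html.parser' is used so the example runs without lxml.

```python
from bs4 import BeautifulSoup

html_doc = "<div><p>one</p><p>two</p><p>three</p></div>"
soup = BeautifulSoup(html_doc, "html.parser")

first = soup.p                       # attribute access: first match only
same_first = soup.find("p")          # find() is the explicit equivalent
all_p = soup.find_all("p")           # every match, as a list-like ResultSet
two_p = soup.find_all("p", limit=2)  # stop after two matches

print(first is same_first)           # True
print([p.string for p in all_p])     # ['one', 'two', 'three']
print(len(two_p))                    # 2
```

The limit argument is handy when only the first few matches of a large document are needed.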

Getting a tag's name

Use the name attribute to get a tag's name.

    from bs4 import BeautifulSoup

    # Build a sample HTML string
    html_doc = """
    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>

    <p class="story">Once upon a time there were three little sisters; and their names were
    <a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
    <a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
    <a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>

    <p class="story">...</p>
    </body>
    </html>
    """

    # Create the soup object
    soup = BeautifulSoup(html_doc, 'lxml')

    print('head tag name:\n', soup.head.name)  # print the name of the head tag
    print('body tag name:\n', soup.body.name)  # print the name of the body tag
    print('html tag name:\n', soup.html.name)  # print the name of the html tag
    # find_all returns a list-like ResultSet, which has no name attribute,
    # so read name from each tag in the result
    print('p tag names:\n', [p.name for p in soup.find_all('p')])

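To match two different tags, pass a list filter rather than separate string filters.

Passing the tag names as two separate strings returns an empty list, because the second positional argument of find_all is treated as an attribute filter (a bare string there matches the CSS class), not as another tag name:

    print(soup.find_all('title', 'p'))

Output:

    []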

Use a list filter to get the content of several tags at once:

    print(soup.find_all(['title', 'p']))

Output:

    [<title>The Dormouse's story</title>, <p class="title"><b>The Dormouse's story</b></p>, <p class="story">Once upon a time there were three little sisters; and their names were
    <a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>,
    <a class="sister" href="http://example.com/lacie" id="link2">Lacie</a> and
    <a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>, <p class="story">...</p>]
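The sketch below shows what the second positional argument of find_all actually does and how it differs from a list filter. The markup is a shortened, made-up variant of the sample document, parsed with the built-in 'html.parser' so it runs without lxml.

```python
from bs4 import BeautifulSoup

html_doc = """
<title>The Dormouse's story</title>
<p class="title">heading</p>
<p class="story">first</p>
<p class="story">second</p>
"""
soup = BeautifulSoup(html_doc, "html.parser")

by_class = soup.find_all("p", "story")      # <p> tags whose class is "story"
same = soup.find_all("p", class_="story")   # the keyword form of the same query
empty = soup.find_all("title", "p")         # looks for <title class="p">: no match
both = soup.find_all(["title", "p"])        # list filter: either tag name

print(len(by_class), len(same), len(empty), len(both))  # 2 2 0 4
```

So the empty list is not an error: the query was valid, it simply asked for a title tag with the class "p".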

Getting the href attribute of a tags

    from bs4 import BeautifulSoup

    # Build a sample HTML string
    html_doc = """
    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>

    <p class="story">Once upon a time there were three little sisters; and their names were
    <a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
    <a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
    <a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>

    <p class="story">...</p>
    """

    # Create the soup object
    soup = BeautifulSoup(html_doc, 'lxml')

    a_list = soup.find_all('a')

    # Loop over the list and read the attribute value
    for a in a_list:
        # Option 1: get() returns the href value (None if the attribute is missing)
        print(a.get('href'))
        # Option 2: attrs holds all attributes as a dict; index the one you want
        print(a.attrs['href'])
        # Option 3: index the tag directly; raises KeyError if the attribute is missing
        print(a['href'])
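The practical difference between the access styles only appears when an attribute is missing. A minimal sketch with a single made-up link (note the two class values), parsed with the built-in 'html.parser' so it runs without lxml:

```python
from bs4 import BeautifulSoup

markup = '<a href="http://example.com/elsie" class="sister big" id="link1">Elsie</a>'
soup = BeautifulSoup(markup, "html.parser")
a = soup.a

print(a.get("href"))          # http://example.com/elsie
print(a.get("title"))         # None: missing attribute, no error
print(a.get("title", "n/a"))  # n/a: optional default value
print(a["class"])             # ['sister', 'big']: class is multi-valued

try:
    a["title"]                # indexing a missing attribute raises KeyError
except KeyError as exc:
    missing = exc.args[0]
    print("missing attribute:", missing)
```

get() is the safer choice when scraping pages where an attribute may or may not be present.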

Extra: prettify() pretty-prints the document so that the nesting of nodes is easier to see and analyze.

    print(soup.prettify())

For comparison, this is the output without prettify():

    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>
    <p class="story">Once upon a time there were three little sisters; and their names were
    <a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>,
    <a class="sister" href="http://example.com/lacie" id="link2">Lacie</a> and
    <a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>
    <p class="story">...</p>
    </body></html>
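On a tiny fragment the effect of prettify() is easy to see: every tag and every string is placed on its own line, indented by nesting depth. A minimal sketch with made-up markup, parsed with the built-in 'html.parser':

```python
from bs4 import BeautifulSoup

soup = BeautifulSoup("<p><b>hi</b></p>", "html.parser")

compact = str(soup)       # markup as a single line
pretty = soup.prettify()  # one node per line, indented by depth

print(compact)
print(pretty)

# Ignoring indentation, the pretty form has one entry per tag or string
pretty_lines = [line.strip() for line in pretty.strip().splitlines()]
print(pretty_lines)
```

Note that prettify() adds whitespace around text, so it is meant for inspection rather than for producing markup to process further.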

Traversing the document tree

    from bs4 import BeautifulSoup

    # Build a sample HTML string
    html_doc = """
    <html><head><title>The Dormouse's story</title></head>
    <body>
    <p class="title"><b>The Dormouse's story</b></p>

    <p class="story">Once upon a time there were three little sisters; and their names were
    <a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
    <a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
    <a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
    and they lived at the bottom of a well.</p>

    <p class="story">...</p>
    </body>
    </html>
    """

    soup = BeautifulSoup(html_doc, 'lxml')

    head = soup.head
    # contents returns a list of all direct children: [<title>The Dormouse's story</title>]
    print(head.contents)
    # children returns an iterator over the direct children: <list_iterator object at 0x00000264BADC2748>
    print(head.children)
    # Any iterator can be looped over
    for h in head.children:
        print(h)

    html = soup.html  # newlines are matched as child nodes too
    # descendants returns a generator that walks every descendant: <generator object Tag.descendants at 0x0000018C15BFF4C8>
    print(html.descendants)
    # Any generator can be looped over
    for h in html.descendants:
        print(h)

    '''
    The most important ones to master:
    string            gets the text inside a tag
    strings           returns a generator over the text of multiple tags
    stripped_strings  like strings, but strips surrounding whitespace and skips whitespace-only strings
    '''
    print(soup.title.string)
    print(soup.html.string)  # None, because html has more than one child

    # Returns a generator object <generator object Tag._all_strings at 0x000001AAFF9EF4C8>
    # soup.html.strings yields every piece of text contained in the html tag
    print(soup.html.strings)
    for h in soup.html.strings:
        print(h)

    # stripped_strings removes the extra whitespace
    # Returns a generator object <generator object PageElement.stripped_strings at 0x000001E31284F4C8>
    print(soup.html.stripped_strings)
    for h in soup.html.stripped_strings:
        print(h)

    '''
    parent   gets the direct parent node
    parents  gets all ancestor nodes
    '''
    title = soup.title
    # parent finds the direct parent
    print(title.parent)
    # parents walks up through all ancestors
    # Returns a generator object <generator object PageElement.parents at 0x000001F02049F4C8>
    print(title.parents)
    for p in title.parents:
        print(p)

    # The parent of html is the whole document
    print(soup.html.parent)
    # <class 'bs4.BeautifulSoup'>
    print(type(soup.html.parent))
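The traversal attributes are easier to compare on a very small document. The sketch below uses made-up markup and the built-in 'html.parser' (so it runs without lxml), and collects the generators into lists to make their contents visible.

```python
from bs4 import BeautifulSoup

html_doc = "<html><head><title>T</title></head><body><p> a <b>b</b> </p></body></html>"
soup = BeautifulSoup(html_doc, "html.parser")

title_text = soup.title.string             # single child string
p_text = soup.p.string                     # None: <p> has several children
raw = list(soup.p.strings)                 # every string, whitespace included
clean = list(soup.p.stripped_strings)      # stripped, whitespace-only strings skipped
ancestors = [parent.name for parent in soup.title.parents]

print(title_text)  # T
print(p_text)      # None
print(raw)         # [' a ', 'b', ' ']
print(clean)       # ['a', 'b']
print(ancestors)   # ['head', 'html', '[document]']
```

The last entry, '[document]', is the name of the BeautifulSoup object itself, which sits at the top of every ancestor chain.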

Practice example

Get all job titles

    html = """
    <table class="tablelist" cellpadding="0" cellspacing="0">
        <tbody>
            <tr class="h">
                <td class="l" width="374">职位名称</td>
                <td>职位类别</td>
                <td>人数</td>
                <td>地点</td>
                <td>发布时间</td>
            </tr>
            <tr class="even">
                <td class="l square"><a target="_blank" href="position_detail.php?id=33824&keywords=python&tid=87&lid=2218">22989-金融云区块链高级研发工程师(深圳)</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-25</td>
            </tr>
            <tr class="odd">
                <td class="l square"><a target="_blank" href="position_detail.php?id=29938&keywords=python&tid=87&lid=2218">22989-金融云高级后台开发</a></td>
                <td>技术类</td>
                <td>2</td>
                <td>深圳</td>
                <td>2017-11-25</td>
            </tr>
            <tr class="even">
                <td class="l square"><a target="_blank" href="position_detail.php?id=31236&keywords=python&tid=87&lid=2218">SNG16-腾讯音乐运营开发工程师(深圳)</a></td>
                <td>技术类</td>
                <td>2</td>
                <td>深圳</td>
                <td>2017-11-25</td>
            </tr>
            <tr class="odd">
                <td class="l square"><a target="_blank" href="position_detail.php?id=31235&keywords=python&tid=87&lid=2218">SNG16-腾讯音乐业务运维工程师(深圳)</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-25</td>
            </tr>
            <tr class="even">
                <td class="l square"><a target="_blank" href="position_detail.php?id=34531&keywords=python&tid=87&lid=2218">TEG03-高级研发工程师(深圳)</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
            <tr class="odd">
                <td class="l square"><a target="_blank" href="position_detail.php?id=34532&keywords=python&tid=87&lid=2218">TEG03-高级图像算法研发工程师(深圳)</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
            <tr class="even">
                <td class="l square"><a target="_blank" href="position_detail.php?id=31648&keywords=python&tid=87&lid=2218">TEG11-高级AI开发工程师(深圳)</a></td>
                <td>技术类</td>
                <td>4</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
            <tr class="odd">
                <td class="l square"><a target="_blank" href="position_detail.php?id=32218&keywords=python&tid=87&lid=2218">15851-后台开发工程师</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
            <tr class="even">
                <td class="l square"><a target="_blank" href="position_detail.php?id=32217&keywords=python&tid=87&lid=2218">15851-后台开发工程师</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
            <tr class="odd">
                <td class="l square"><a id="test" class="test" target='_blank' href="position_detail.php?id=34511&keywords=python&tid=87&lid=2218">SNG11-高级业务运维工程师(深圳)</a></td>
                <td>技术类</td>
                <td>1</td>
                <td>深圳</td>
                <td>2017-11-24</td>
            </tr>
        </tbody>
    </table>
    """

Approach

The data we want sits in the a tag inside each tr node, so we only need to loop over all tr nodes and take the text of the a tag from each one.

Implementation

Here html is the table markup string defined in the listing above.

    from bs4 import BeautifulSoup

    # html = """...""" (the table markup string from the listing above)

    # Create the soup object
    soup = BeautifulSoup(html, 'lxml')

    # Use find_all() to collect every tr node
    # (the first tr is the table header, so it is skipped)
    tr_list = soup.find_all('tr')[1:]

    # Loop over tr_list and print the text of the a tag in each row
    for tr in tr_list:
        a_list = tr.find_all('a')
        print(a_list[0].string)

The output is:

22989-金融云区块链高级研发工程师(深圳)

22989-金融云高级后台开发

SNG16-腾讯音乐运营开发工程师(深圳)

SNG16-腾讯音乐业务运维工程师(深圳)

TEG03-高级研发工程师(深圳)

TEG03-高级图像算法研发工程师(深圳)

TEG11-高级AI开发工程师(深圳)

15851-后台开发工程师

15851-后台开发工程师

SNG11-高级业务运维工程师(深圳)
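As an alternative to looping over tr nodes, the same result can be sketched with a CSS selector through select(). This is a different technique from the one used above, shown on a shortened, made-up table so the example stays self-contained; it uses the built-in 'html.parser' so it runs without lxml.

```python
from bs4 import BeautifulSoup

# A shortened stand-in for the job table (hypothetical rows, for illustration only)
html = """
<table class="tablelist">
  <tr class="h"><td class="l">Title</td><td>Category</td></tr>
  <tr class="even"><td class="l square"><a href="detail?id=1">Backend developer</a></td><td>Tech</td></tr>
  <tr class="odd"><td class="l square"><a href="detail?id=2">Operations engineer</a></td><td>Tech</td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

# "tr td a" matches every <a> nested inside a <td> inside a <tr>;
# the header row has no <a>, so it drops out without slicing
titles = [a.get_text(strip=True) for a in soup.select("tr td a")]

print(titles)  # ['Backend developer', 'Operations engineer']
```

Because the selector only matches rows that actually contain a link, there is no need for the [1:] slice or for indexing a_list[0], which would raise IndexError on a row without an a tag.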


Original article: https://blog.csdn.net/xqe777/article/details/123588660