找到detect.py,在大概113行,找到plot_one_box

|

1

2

3

4

5

6

7

8

9

10

|

# Write results for *xyxy, conf, cls in reversed(det): if save_txt: # Write to file xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist() # normalized xywh with open(txt_path + '.txt', 'a') as f: f.write(('%g ' * 5 + '\n') % (cls, *xywh)) # label format if save_img or view_img: # Add bbox to image label = '%s %.2f' % (names[int(cls)], conf) plot_one_box(xyxy, im0, label=label, color=colors[int(cls)], line_thickness=3) |

ctr+鼠标点击,进入general.py,并自动定位到plot_one_box函数,修改函数为

|

1

2

3

4

5

6

7

|

def plot_one_box(x, img, color=None, label=None, line_thickness=None): # Plots one bounding box on image img tl = line_thickness or round(0.002 * (img.shape[0] + img.shape[1]) / 2) + 1 # line/font thickness color = color or [random.randint(0, 255) for _ in range(3)] c1, c2 = (int(x[0]), int(x[1])), (int(x[2]), int(x[3])) cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA) print("左上点的坐标为:(" + str(c1[0]) + "," + str(c1[1]) + "),右下点的坐标为(" + str(c2[0]) + "," + str(c2[1]) + ")") |

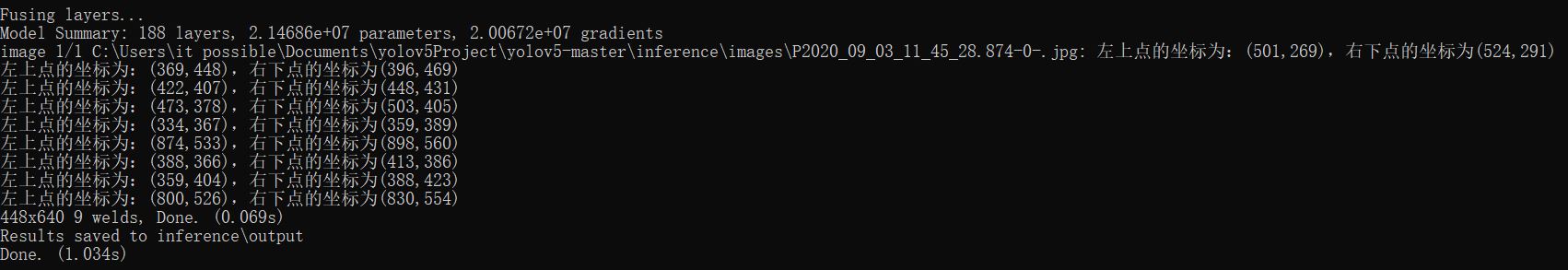

即可输出目标坐标信息了

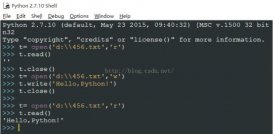

附:python yolov5检测模型返回坐标的方法实例代码

python yolov5检测模型返回坐标的方法 直接搜索以下代码替换下

|

1

2

3

4

5

|

if save_img or view_img: # Add bbox to image label = f'{names[int(cls)]} {conf:.2f}' c1, c2 = (int(xyxy[0]), int(xyxy[1])), (int(xyxy[2]), int(xyxy[3])) print("左上点的坐标为:(" + str(c1[0]) + "," + str(c1[1]) + "),右下点的坐标为(" + str(c2[0]) + "," + str(c2[1]) + ")") return [c1,c2] |

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

|

if __name__ == '__main__':parser = argparse.ArgumentParser()parser.add_argument('--weights', nargs='+', type=str, default='yolov5s.pt', help='model.pt path(s)')parser.add_argument('--source', type=str, default='data/images', help='source') # file/folder, 0 for webcamparser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')parser.add_argument('--conf-thres', type=float, default=0.25, help='object confidence threshold')parser.add_argument('--iou-thres', type=float, default=0.45, help='IOU threshold for NMS')parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')parser.add_argument('--view-img', action='store_true', help='display results')parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')parser.add_argument('--nosave', action='store_true', help='do not save images/videos')parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --class 0, or --class 0 2 3')parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')parser.add_argument('--augment', action='store_true', help='augmented inference')parser.add_argument('--update', action='store_true', help='update all models')parser.add_argument('--project', default='runs/detect', help='save results to project/name')parser.add_argument('--name', default='exp', help='save results to project/name')parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')opt = parser.parse_args()check_requirements(exclude=('pycocotools', 'thop'))opt.source='data/images/1/'result=detect()print('最终检测结果:',result); |

总结

到此这篇关于如何使用yolov5输出检测到的目标坐标信息的文章就介绍到这了,更多相关yolov5输出目标坐标内容请搜索服务器之家以前的文章或继续浏览下面的相关文章希望大家以后多多支持服务器之家!

原文链接:https://blog.csdn.net/weixin_44612221/article/details/115384742