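I've often seen videos online that are made up entirely of characters, and they look really cool. I never understood how they were made, but after looking into it over the past couple of days I found that it isn't hard.

First, here is the final result (if it looks blurry, follow the link below for the HD version):

https://pan.baidu.com/s/1DvedXlDZ4dgHKLogdULogg (extraction code: 1234)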

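How is it done?

In short, turning a color video into a black-and-white video drawn with characters takes the following steps:

- Extract the frames of the original video, convert each frame to grayscale, and represent each pixel with a corresponding character.

- Reassemble the resulting strings into character images.

- Combine all the character images into a character video.

- Import the audio of the original video into the new character video.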

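How to run

The full code is at the end of the article; just run python3 video2char.py. The program asks for the local path of the video and the clarity of the output (0 is the blurriest, 1 is the sharpest; the sharper the output, the slower the conversion).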
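
To see why a higher clarity is slower, here is a small sketch of how the full code turns the clarity value into a font size: the value is clamped to the range 0 to 1 and mapped linearly from 25 down to 5. The function name font_size_for is my own; the formula is the one used in video2str. A smaller font means more characters per frame, and therefore more work per frame.

```python
def font_size_for(clarity):
    """Map a clarity in [0, 1] to a font size between 25 and 5."""
    clamped = max(min(float(clarity), 1), 0)  # out-of-range input is clamped
    return int(25 - 20 * clamped)


if __name__ == "__main__":
    for c in (0, 0.5, 1, 3):
        print(c, font_size_for(c))
```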

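The code depends on several libraries, including tqdm, opencv_python and moviepy (it also imports PIL, which is provided by the Pillow package), so first make sure they are all installed with pip3 install.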

How it works

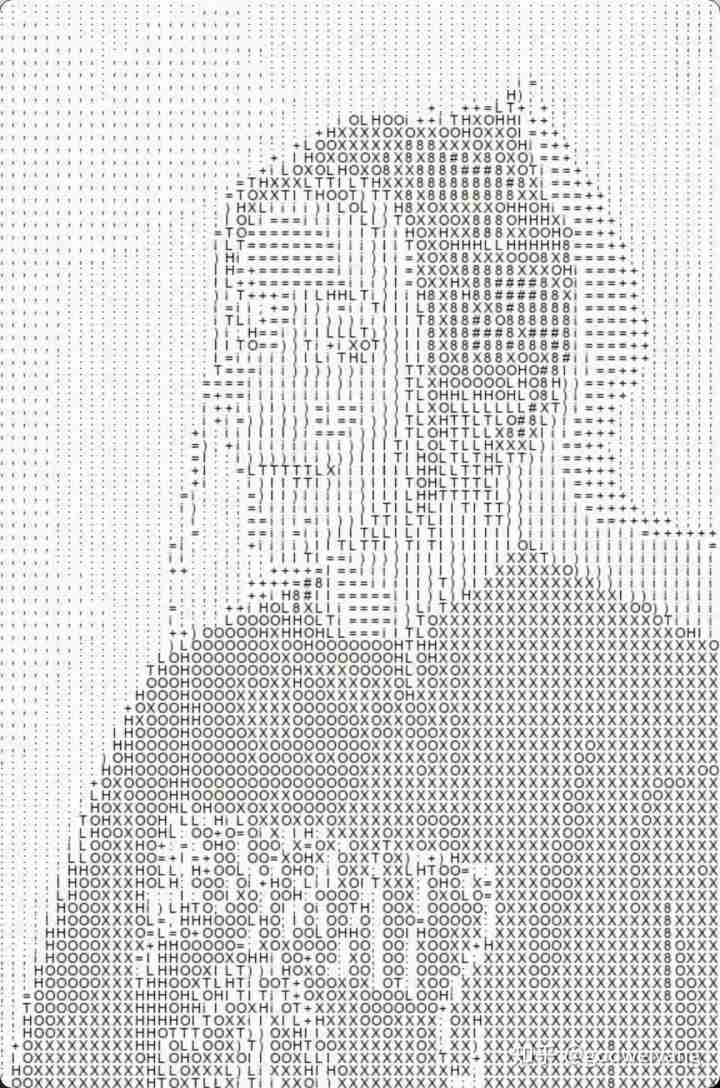

这里面最关键的步骤就是如何将一帧彩色图像转变为黑白的字符图像,如下图所示:

从青蛙公主视频抽帧出来的

用字符画出来的

而转变的原理其实很简单。首先因为一个字符画在图像里会占据很大一个像素块,所以必须先对彩色图像进行压缩,连续的一个像素块可以合并,这个压缩过程就是opencv的resize操作。

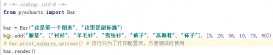
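
As a rough illustration of what the downscaling step does, the sketch below shrinks a grayscale image, stored as a list of rows, by picking one source pixel per output cell (nearest-neighbor sampling). It is a plain-Python stand-in rather than OpenCV's implementation, and the function name downscale_nearest is hypothetical; in the real script the work is done by cv2.resize.

```python
def downscale_nearest(img, new_w, new_h):
    """Shrink a 2D list of gray values to new_w x new_h by sampling."""
    old_h, old_w = len(img), len(img[0])
    out = []
    for y in range(new_h):
        src_y = y * old_h // new_h  # source row for this output row
        row = []
        for x in range(new_w):
            src_x = x * old_w // new_w  # source column for this output column
            row.append(img[src_y][src_x])
        out.append(row)
    return out


if __name__ == "__main__":
    frame = [
        [0, 0, 255, 255],
        [0, 0, 255, 255],
        [128, 128, 64, 64],
        [128, 128, 64, 64],
    ]
    print(downscale_nearest(frame, 2, 2))
```

Each 2x2 block of the toy frame collapses into a single value, which is exactly the "one pixel per character cell" idea.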

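Next, each pixel of the downscaled image is converted to a grayscale value and then to the corresponding character. For the characters I use the string below, ordered from dark to light; in my experience this set gives the best results:

"#8XOHLTI)i=+;:,. "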

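The mapping from a gray value to a character can be sketched in a few lines. The helper names gray_to_char and row_to_text are mine, but the formula is the one used in the full code: a value from 0 to 255 is scaled to an index into the character string, so dark pixels get dense characters and bright pixels get sparse ones. Dividing by 256 rather than 255 keeps the index in range even for a pure white pixel.

```python
ASCII_CHARS = "#8XOHLTI)i=+;:,. "  # dark to light, as in the article


def gray_to_char(gray):
    """Return the character for a gray value in 0..255."""
    return ASCII_CHARS[int(gray / 256 * len(ASCII_CHARS))]


def row_to_text(row):
    """Convert one row of gray values to a string of characters."""
    return "".join(gray_to_char(g) for g in row)


if __name__ == "__main__":
    print(repr(row_to_text([0, 64, 128, 192, 255])))
```
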
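The characters then need to be drawn onto a new canvas. The thing to watch is that they are laid out evenly and compactly, ideally without much extra blank space around the edges of the canvas.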
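
The layout arithmetic can be spelled out as follows. This sketch uses a hypothetical helper grid_layout and made-up frame and glyph sizes; it computes how many character columns and rows fit into the frame and the margins that center the grid, following the same integer arithmetic as the full code.

```python
def grid_layout(raw_w, raw_h, char_w, char_h):
    """Return (cols, rows, margin_x, margin_y) for a centered character grid."""
    cols = raw_w // char_w
    rows = raw_h // char_h
    margin_x = (raw_w - cols * char_w) // 2  # leftover pixels split left/right
    margin_y = (raw_h - rows * char_h) // 2  # leftover pixels split top/bottom
    return cols, rows, margin_x, margin_y


def char_origin(col, row, layout, char_w, char_h):
    """Top-left pixel at which the character in (col, row) is drawn."""
    _, _, margin_x, margin_y = layout
    return margin_x + col * char_w, margin_y + row * char_h


if __name__ == "__main__":
    # Assumed values: a 1920x1080 frame and an 11x14 pixel glyph cell.
    layout = grid_layout(1920, 1080, 11, 14)
    print(layout)
    print(char_origin(3, 2, layout, 11, 14))
```

Because the grid size is rounded down, at most one character cell of blank space is left over in each direction, and the margins split it evenly between the two sides.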

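Finally, merge all the character images into a video. The merged video has no sound, so the moviepy library is used to bring over the audio from the original video.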
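
One detail of the merge step is easy to get wrong: the frame files are named pic_0.jpg, pic_1.jpg, and so on, and a plain alphabetical sort would put pic_10.jpg before pic_2.jpg. The full code avoids this by sorting on the number in each filename. Here is a minimal sketch of the idea (the function name sort_frames is my own):

```python
import re


def sort_frames(filenames):
    """Sort frame files by the first number that appears in each name."""
    return sorted(filenames, key=lambda name: int(re.findall(r"\d+", name)[0]))


if __name__ == "__main__":
    names = ["pic_10.jpg", "pic_2.jpg", "pic_0.jpg", "pic_1.jpg"]
    print(sorted(names))       # alphabetical: pic_10 sorts before pic_2
    print(sort_frames(names))  # numeric: 0, 1, 2, 10
```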

Full code

```python
import os
import re
import shutil

import cv2
from moviepy.editor import VideoFileClip  # moviepy 1.x import path
from PIL import Image, ImageDraw, ImageFont
from tqdm import tqdm, trange


class V2Char:
    font_path = "Arial.ttf"  # must be a TrueType font that Pillow can locate
    ascii_char = "#8XOHLTI)i=+;:,. "  # ordered from dark to light

    def __init__(self, video_path, clarity):
        self.video_path = video_path
        self.clarity = clarity
        # Path without the extension; splitext also copes with "./video.mp4".
        self.base = os.path.splitext(video_path)[0]

    def video2str(self):
        def convert(img):
            # Downscale so that one pixel corresponds to one character cell.
            if img.shape[0] > self.text_size[1] or img.shape[1] > self.text_size[0]:
                img = cv2.resize(img, self.text_size, interpolation=cv2.INTER_NEAREST)
            chars = []
            for i in range(img.shape[0]):
                for j in range(img.shape[1]):
                    # Scale a gray value in 0..255 to an index into ascii_char.
                    chars.append(self.ascii_char[int(img[i, j] / 256 * len(self.ascii_char))])
            return "".join(chars)

        print("Converting the source video to characters...")
        self.char_video = []
        cap = cv2.VideoCapture(self.video_path)
        self.fps = cap.get(cv2.CAP_PROP_FPS)
        self.nframe = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))
        self.raw_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
        self.raw_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
        # Clarity 0 gives font size 25 (coarse), clarity 1 gives font size 5 (fine).
        font_size = int(25 - 20 * max(min(float(self.clarity), 1), 0))
        self.font = ImageFont.truetype(self.font_path, font_size)
        # The original used font.getsize, which Pillow 10 removed; the right and
        # bottom edges of getbbox give the same (width, height) pair.
        self.char_width, self.char_height = max(
            self.font.getbbox(c)[2:] for c in self.ascii_char
        )
        self.text_size = (
            int(self.raw_width / self.char_width),
            int(self.raw_height / self.char_height),
        )
        for _ in trange(self.nframe):
            ok, raw_frame = cap.read()
            if not ok:  # the reported frame count can exceed the readable frames
                break
            self.char_video.append(convert(cv2.cvtColor(raw_frame, cv2.COLOR_BGR2GRAY)))
        cap.release()

    def str2fig(self):
        print("Rendering character images...")
        col, row = self.text_size
        catalog = self.base
        os.makedirs(catalog, exist_ok=True)
        # Margins that center the character grid on the canvas.
        blank_width = int((self.raw_width - col * self.char_width) / 2)
        blank_height = int((self.raw_height - row * self.char_height) / 2)
        for p_id in trange(len(self.char_video)):
            frame = self.char_video[p_id]
            lines = [frame[i * col : (i + 1) * col] for i in range(row)]
            im = Image.new("RGB", (self.raw_width, self.raw_height), (255, 255, 255))
            dr = ImageDraw.Draw(im)
            for i, line in enumerate(lines):
                for j, ch in enumerate(line):
                    dr.text(
                        (blank_width + j * self.char_width, blank_height + i * self.char_height),
                        ch,
                        font=self.font,
                        fill="#000000",
                    )
            im.save(os.path.join(catalog, "pic_{}.jpg".format(p_id)))

    def jpg2video(self):
        print("Assembling character images into a video...")
        catalog = self.base
        images = os.listdir(catalog)
        # Sort numerically by frame index so pic_10 comes after pic_9.
        images.sort(key=lambda x: int(re.findall(r"\d+", x)[0]))
        im = Image.open(os.path.join(catalog, images[0]))
        fourcc = cv2.VideoWriter_fourcc("m", "p", "4", "v")
        # Write next to the source video so that merge_audio finds the file.
        vw = cv2.VideoWriter(catalog + "_tmp.mp4", fourcc, self.fps, im.size)
        for image in tqdm(images):
            vw.write(cv2.imread(os.path.join(catalog, image)))
        vw.release()
        shutil.rmtree(catalog)

    def merge_audio(self):
        print("Adding the original audio to the character video...")
        raw_video = VideoFileClip(self.video_path)
        char_video = VideoFileClip(self.base + "_tmp.mp4")
        video = char_video.set_audio(raw_video.audio)  # moviepy 1.x method name
        video.write_videofile(
            self.base + f"_{self.clarity}.mp4",
            codec="libx264",
            audio_codec="aac",
        )
        os.remove(self.base + "_tmp.mp4")

    def gen_video(self):
        self.video2str()
        self.str2fig()
        self.jpg2video()
        self.merge_audio()


if __name__ == "__main__":
    video_path = input("Path to the video file:\n")
    clarity = input("Clarity (0 to 1, press Enter for the default 0):\n") or 0
    v2char = V2Char(video_path, clarity)
    v2char.gen_video()
```


Original article: https://blog.csdn.net/God_WeiYang/article/details/122921891